The network layer¶

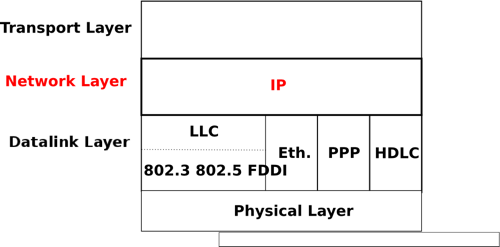

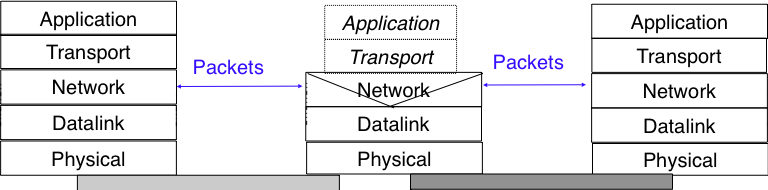

The transport layer enables applications to exchange data efficiently and reliably. Transport layer entities expect to be able to send segments to any destination without having to understand anything about the underlying subnetwork technologies. Many subnetwork technologies exist, and most of them differ in subtle details (frame size, addressing, ...). The network layer is the glue between these subnetworks and the transport layer. It hides the complexity of the underlying subnetworks from the transport layer and ensures that information can be exchanged between hosts connected to different types of subnetworks.

In this chapter, we first explain the principles of the network layer. These principles include the datagram and virtual circuit modes, the separation between the data plane and the control plane and the algorithms used by routing protocols. Then, we explain, in more detail, the network layer in the Internet, starting with IPv4 and IPv6 and then moving to the routing protocols (RIP, OSPF and BGP).

Principles¶

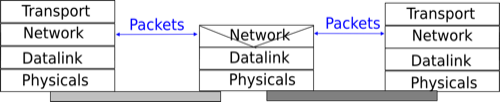

The main objective of the network layer is to allow endsystems, connected to different networks, to exchange information through intermediate systems called routers. The unit of information in the network layer is called a packet.

Before explaining the network layer in detail, it is useful to begin by analysing the service provided by the datalink layer. There are many variants of the datalink layer. Some provide a connection-oriented service while others provide a connectionless service. In this section, we focus on connectionless datalink layer services as they are the most widely used. Using a connection-oriented datalink layer causes some problems that are beyond the scope of this chapter. See RFC 3819 for a discussion on this topic.

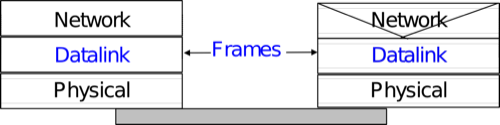

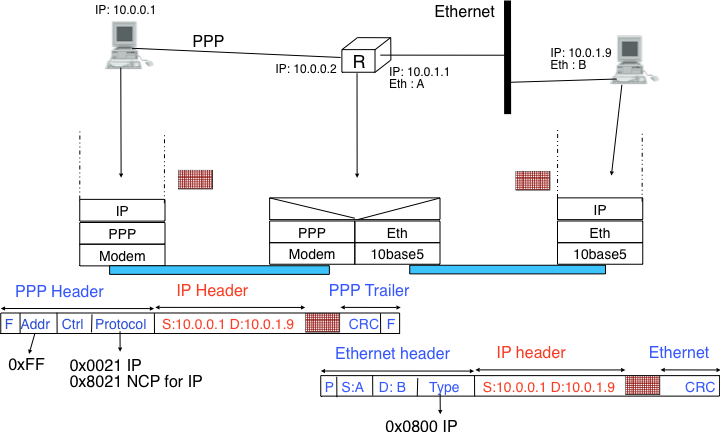

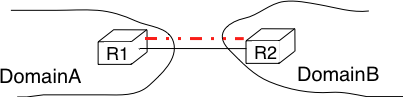

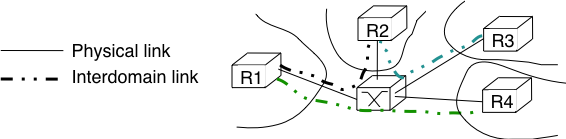

There are three main types of datalink layers. The simplest datalink layer is when there are only two communicating systems that are directly connected through the physical layer. Such a datalink layer is used when there is a point-to-point link between the two communicating systems. The two systems can be endsystems or routers. PPP, defined in RFC 1661, is an example of such a point-to-point datalink layer. Datalink layers exchange frames and a datalink frame sent by a datalink layer entity on the left is transmitted through the physical layer, so that it can reach the datalink layer entity on the right. Point-to-point datalink layers can either provide an unreliable service (frames can be corrupted or lost) or a reliable service (in this case, the datalink layer includes retransmission mechanisms similar to the ones used in the transport layer). The unreliable service is frequently used above physical layers (e.g. optical fiber, twisted pairs) having a low bit error ratio while reliability mechanisms are often used in wireless networks to recover locally from transmission errors.

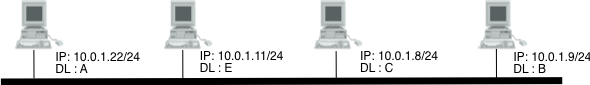

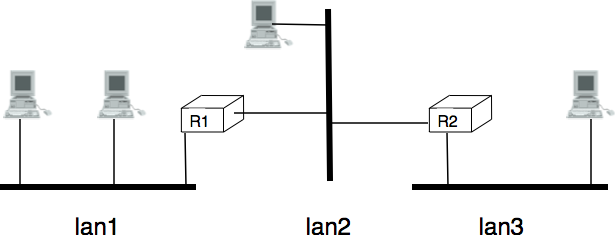

The second type of datalink layer is the one used in Local Area Networks (LAN). Conceptually, a LAN is a set of communicating devices such that any two devices can directly exchange frames through the datalink layer. Both endsystems and routers can be connected to a LAN. Some LANs only connect a few devices, but there are LANs that can connect hundreds or even thousands of devices.

In the next chapter, we describe the organisation and the operation of Local Area Networks. An important difference between the point-to-point datalink layers and the datalink layers used in LANs is that in a LAN, each communicating device is identified by a unique datalink layer address. This address is usually embedded in the hardware of the device and different types of LANs use different types of datalink layer addresses. A communicating device attached to a LAN can send a datalink frame to any other communicating device that is attached to the same LAN. Most LANs also support special broadcast and multicast datalink layer addresses. A frame sent to the broadcast address of the LAN is delivered to all communicating devices that are attached to the LAN. The multicast addresses are used to identify groups of communicating devices. When a frame is sent towards a multicast datalink layer address, it is delivered by the LAN to all communicating devices that belong to the corresponding group.

The third type of datalink layer is used in Non-Broadcast Multi-Access (NBMA) networks. Like a LAN, these networks are used to interconnect devices, and every device attached to an NBMA network is identified by a unique datalink layer address. However, and this is the main difference between an NBMA network and a traditional LAN, the NBMA service only supports unicast. The datalink layer service provided by an NBMA network supports neither broadcast nor multicast.

Unfortunately, no datalink layer is able to send frames of unlimited size. Each datalink layer is characterised by a maximum frame size. There are more than a dozen different datalink layers and most of them use a different maximum frame size. The network layer must cope with this heterogeneity of the datalink layer.

The network layer itself relies on the following principles :

- Each network layer entity is identified by a network layer address. This address is independent of the datalink layer addresses that it may use.

- The service provided by the network layer does not depend on the service or the internal organisation of the underlying datalink layers.

- The network layer is conceptually divided into two planes : the data plane and the control plane. The data plane contains the protocols and mechanisms that allow hosts and routers to exchange packets carrying user data. The control plane contains the protocols and mechanisms that enable routers to efficiently learn how to forward packets towards their final destination.

The independence of the network layer from the underlying datalink layer is a key principle of the network layer. It ensures that the network layer can be used to allow hosts attached to different types of datalink layers to exchange packets through intermediate routers. Furthermore, this allows the datalink layers and the network layer to evolve independently from each other. This enables the network layer to be easily adapted to a new datalink layer every time a new datalink layer is invented.

There are two types of service that can be provided by the network layer :

- an unreliable connectionless service

- a connection-oriented, reliable or unreliable, service

Connection-oriented services have been popular with technologies such as X.25 and ATM or frame-relay, but nowadays most networks use an unreliable connectionless service. This is our main focus in this chapter.

Organisation of the network layer¶

There are two possible internal organisations of the network layer :

- datagram

- virtual circuits

The internal organisation of the network is orthogonal to the service that it provides, but most of the time a datagram organisation is used to provide a connectionless service while a virtual circuit organisation is used in networks that provide a connection-oriented service.

Datagram organisation¶

The first and most popular organisation of the network layer is the datagram organisation. This organisation is inspired by the organisation of the postal service. Each host is identified by a network layer address. To send information to a remote host, a host creates a packet that contains :

- the network layer address of the destination host

- its own network layer address

- the information to be sent

The network layer limits the maximum packet size. Thus, the information must have been divided into packets by the transport layer before being passed to the network layer.

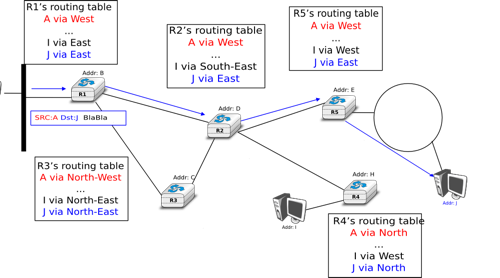

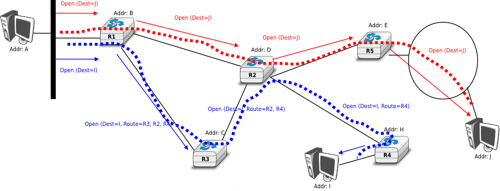

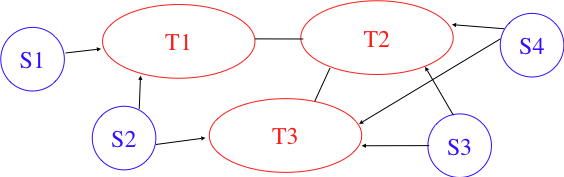

To understand the datagram organisation, let us consider the figure below. A network layer address, represented by a letter, has been assigned to each host and router. To send some information to host J, host A creates a packet containing its own address, the destination address and the information to be exchanged.

With the datagram organisation, routers use hop-by-hop forwarding. This means that when a router receives a packet that is not destined to itself, it looks up the destination address of the packet in its routing table. A routing table is a data structure that maps each destination address (or set of destination addresses) to the outgoing interface over which a packet destined to this address must be forwarded to reach its final destination.

The main constraint imposed on the routing tables is that they must allow any host in the network to reach any other host. This implies that each router must know a route towards each destination, but also that the paths composed from the information stored in the routing tables must not contain loops. Otherwise, some destinations would be unreachable.

In the example above, host A sends its packet to router R1. R1 consults its routing table and forwards the packet towards R2. Based on its own routing table, R2 decides to forward the packet to R5 that can deliver it to its destination.
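The hop-by-hop forwarding described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the routing tables are hypothetical (only the path through R1, R2 and R5 towards J comes from the example), each entry maps a destination to the next node rather than to an outgoing interface, and the `forward` helper with its `max_hops` guard is introduced for this sketch.

```python
# Hypothetical routing tables: destination address -> next node on the path.
# Only the entries needed to reach host J are shown.
ROUTING_TABLES = {
    "R1": {"J": "R2"},
    "R2": {"J": "R5"},
    "R5": {"J": "J"},   # R5 is assumed to be directly attached to host J
}


def forward(first_router, destination, max_hops=16):
    """Follow the routing tables hop by hop and return the nodes visited."""
    path = [first_router]
    current = first_router
    while current != destination:
        if len(path) > max_hops:
            raise RuntimeError("forwarding loop suspected")
        table = ROUTING_TABLES.get(current, {})
        if destination not in table:
            raise LookupError(f"{current} has no route towards {destination}")
        current = table[destination]
        path.append(current)
    return path


# Host A hands its packet for J to router R1.
path_to_j = forward("R1", "J")
```

Each router only consults its own table, so the complete path exists nowhere in the network. It emerges from the sequence of independent lookups.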

To allow hosts to exchange packets, a network relies on two different types of protocols and mechanisms. First, there must be a precise definition of the format of the packets that are sent by hosts and processed by routers. Second, the algorithm used by the routers to forward these packets must be defined. This protocol and this algorithm are part of the data plane of the network layer. The data plane contains all the protocols and algorithms that are used by hosts and routers to create and process the packets that contain user data.

The data plane, and in particular the forwarding algorithm used by the routers, depends on the routing tables that are maintained on each router. These routing tables can be maintained by using various techniques (manual configuration, distributed protocols, centralised computation, etc.). These techniques are part of the control plane of the network layer. The control plane contains all the protocols and mechanisms that are used to compute and install routing tables on the routers.

The datagram organisation has been very popular in computer networks. Datagram based network layers include IPv4 and IPv6 in the global Internet, CLNP defined by the ISO, IPX defined by Novell or XNS defined by Xerox [Perlman2000].

Virtual circuit organisation¶

The main advantage of the datagram organisation is its simplicity. The principles of this organisation can easily be understood. Furthermore, it allows a host to easily send a packet towards any destination at any time. However, as each packet is forwarded independently by intermediate routers, packets sent by a host may not follow the same path to reach a given destination. This may cause packet reordering, which may be annoying for transport protocols. Furthermore, as a router using hop-by-hop forwarding always forwards packets sent towards the same destination over the same outgoing interface, this may cause congestion over some links.

The second organisation of the network layer, called virtual circuits, has been inspired by the organisation of telephone networks. Telephone networks have been designed to carry phone calls that usually last a few minutes. Each phone is identified by a telephone number and is attached to a telephone switch. To initiate a phone call, a telephone first needs to send the destination’s phone number to its local switch. The switch cooperates with the other switches in the network to create a bi-directional channel between the two telephones through the network. This channel will be used by the two telephones during the lifetime of the call and will be released at the end of the call. Until the 1960s, most of these channels were created manually, by telephone operators, upon request of the caller. Today’s telephone networks use automated switches and allow several channels to be carried over the same physical link, but the principles remain roughly the same.

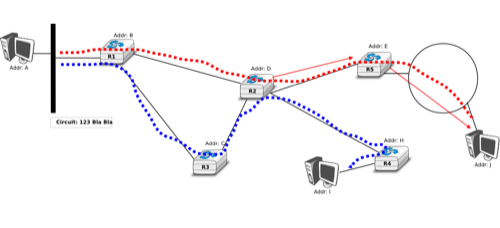

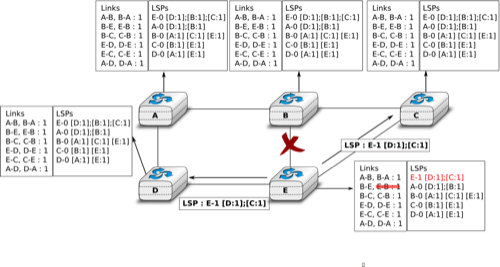

In a network using virtual circuits, all hosts are identified with a network layer address. However, a host must explicitly request the establishment of a virtual circuit before being able to send packets to a destination host. The request to establish a virtual circuit is processed by the control plane, which installs state to create the virtual circuit between the source and the destination through intermediate routers. All the packets that are sent on the virtual circuit contain a virtual circuit identifier that allows the routers to determine to which virtual circuit each packet belongs. This is illustrated in the figure below with one virtual circuit between host A and host I and another one between host A and host J.

The establishment of a virtual circuit is performed using a signalling protocol in the control plane. Usually, the source host sends a signalling message to indicate to its router the address of the destination and possibly some performance characteristics of the virtual circuit to be established. The first router can process the signalling message in two different ways.

A first solution is for the router to consult its routing table, remember the characteristics of the requested virtual circuit and forward it over its outgoing interface towards the destination. The signalling message is thus forwarded hop-by-hop until it reaches the destination and the virtual circuit is opened along the path followed by the signalling message. This is illustrated with the red virtual circuit in the figure below.

A second solution can be used if the routers know the entire topology of the network. In this case, the first router can use a technique called source routing. Upon reception of the signalling message, the first router chooses the path of the virtual circuit in the network. This path is encoded as the list of the addresses of all intermediate routers to reach the destination. It is included in the signalling message and intermediate routers can remove their address from the signalling message before forwarding it. This technique enables routers to spread the virtual circuits throughout the network better. If the routers know the load of remote links, they can also select the least loaded path when establishing a virtual circuit. This solution is illustrated with the blue circuit in the figure above.

The last point to be discussed about the virtual circuit organisation is its data plane. The data plane mainly defines the format of the data packets and the algorithm used by routers to forward packets. The data packets contain a virtual circuit identifier, encoded as a fixed number of bits. These virtual circuit identifiers are usually called labels.

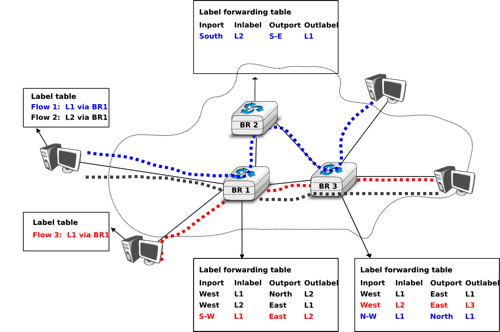

Each host maintains a flow table that associates a label with each virtual circuit that it has established. When a router receives a packet containing a label, it extracts the label and consults its label forwarding table. This table is a data structure that maps each couple (incoming interface, label) to the outgoing interface to be used to forward the packet as well as the label that must be placed in the outgoing packets. In practice, the label forwarding table can be implemented as a vector and the couple (incoming interface, label) is the index of the entry in the vector that contains the outgoing interface and the outgoing label. Thus a single memory access is sufficient to consult the label forwarding table. The utilisation of the label forwarding table is illustrated in the figure below.
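The label lookup described above can be sketched as follows. The router names, interface names and label values are hypothetical, and a Python dictionary stands in for the vector indexed by (incoming interface, label) that the text mentions.

```python
# Hypothetical label forwarding tables, one per router.
# Key: (incoming interface, incoming label)
# Value: (outgoing interface, outgoing label)
LABEL_TABLES = {
    "R1": {("west", 5): ("east", 7)},
    "R2": {("west", 7): ("south", 2)},
}


def switch(router, in_interface, label):
    """Return the outgoing interface and the label to place in the packet."""
    return LABEL_TABLES[router][(in_interface, label)]


# A packet enters R1 on its west interface with label 5 ...
out_if_r1, label_after_r1 = switch("R1", "west", 5)
# ... and reaches R2 on its west interface carrying the swapped label.
out_if_r2, label_after_r2 = switch("R2", "west", label_after_r1)
```

Because the key is an exact match, the lookup needs no search over address prefixes, which is why this forwarding step is considered simpler than a datagram lookup.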

The virtual circuit organisation has been mainly used in public networks, starting from X.25 and then Frame Relay and Asynchronous Transfer Mode (ATM) networks.

Both the datagram and virtual circuit organisations have advantages and drawbacks. The main advantage of the datagram organisation is that hosts can easily send packets to any number of destinations while the virtual circuit organisation requires the establishment of a virtual circuit before the transmission of a data packet. This solution can be costly for hosts that exchange small amounts of data. On the other hand, the main advantage of the virtual circuit organisation is that the forwarding algorithm used by routers is simpler than when using the datagram organisation. Furthermore, the utilisation of virtual circuits may allow the load to be better spread through the network thanks to the utilisation of multiple virtual circuits. The MultiProtocol Label Switching (MPLS) technique that we will discuss in another revision of this book can be considered as a good compromise between datagram and virtual circuits. MPLS uses virtual circuits between routers, but does not extend them to the endhosts. Additional information about MPLS may be found in [ML2011].

The control plane¶

One of the objectives of the control plane in the network layer is to maintain the routing tables that are used on all routers. As indicated earlier, a routing table is a data structure that contains, for each destination address (or block of addresses) known by the router, the outgoing interface over which the router must forward a packet destined to this address. The routing table may also contain additional information such as the address of the next router on the path towards the destination or an estimation of the cost of this path.

In this section, we discuss the three main techniques that can be used to maintain the routing tables in a network.

Static routing¶

The simplest solution is to pre-compute all the routing tables of all routers and to install them on each router. Several algorithms can be used to compute these tables.

A simple solution is to use shortest path routing and to minimise the number of intermediate routers to reach each destination. More complex algorithms can take into account the expected load on the links to ensure that congestion does not occur for a given traffic demand. These algorithms must all ensure that :

- all routers are configured with a route to reach each destination

- none of the paths composed with the entries found in the routing tables contain a cycle. Such a cycle would lead to a forwarding loop.
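As an illustration of the two constraints above, the sketch below precomputes routing tables with a breadth-first search, which minimises the number of intermediate routers, and then follows the tables hop by hop to check that every destination is reached without a loop. The topology is a hypothetical five-router network, and each table stores a next-hop router instead of an outgoing interface.

```python
from collections import deque

# Hypothetical five-router topology: router -> directly connected routers.
TOPOLOGY = {
    "A": ["B", "D"],
    "B": ["A", "C", "E"],
    "C": ["B", "E"],
    "D": ["A", "E"],
    "E": ["B", "C", "D"],
}


def static_table(source):
    """Breadth-first search from source; returns destination -> next hop."""
    next_hop = {}
    queue = deque()
    for neighbour in TOPOLOGY[source]:
        next_hop[neighbour] = neighbour
        queue.append(neighbour)
    while queue:
        node = queue.popleft()
        for neighbour in TOPOLOGY[node]:
            if neighbour != source and neighbour not in next_hop:
                # Reach this router through the same first hop as `node`.
                next_hop[neighbour] = next_hop[node]
                queue.append(neighbour)
    return next_hop


TABLES = {router: static_table(router) for router in TOPOLOGY}


def path(source, destination):
    """Follow the tables hop by hop; fails if a forwarding loop exists."""
    hops = [source]
    while hops[-1] != destination:
        hops.append(TABLES[hops[-1]][destination])
        assert len(hops) <= len(TOPOLOGY), "forwarding loop"
    return hops
```

Calling `path(s, d)` for every pair of routers is a simple way to validate a set of precomputed tables before installing them.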

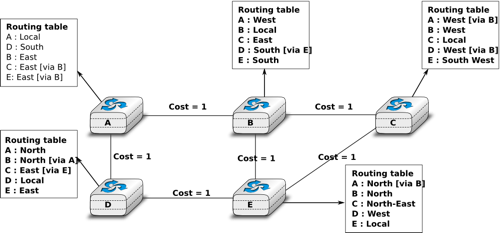

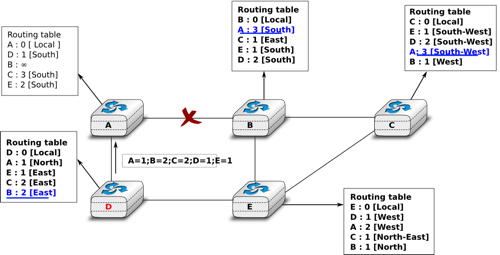

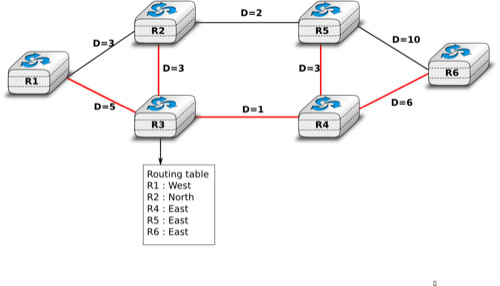

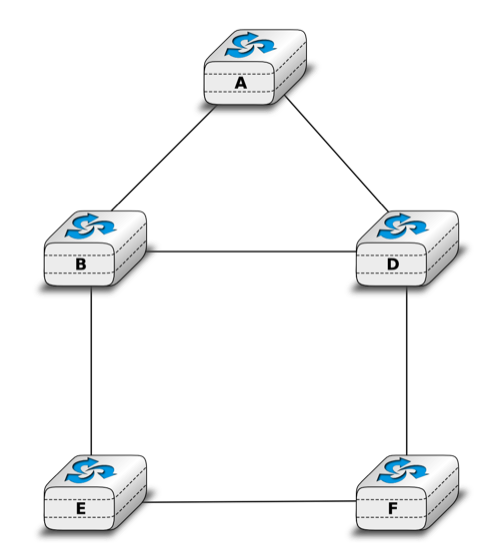

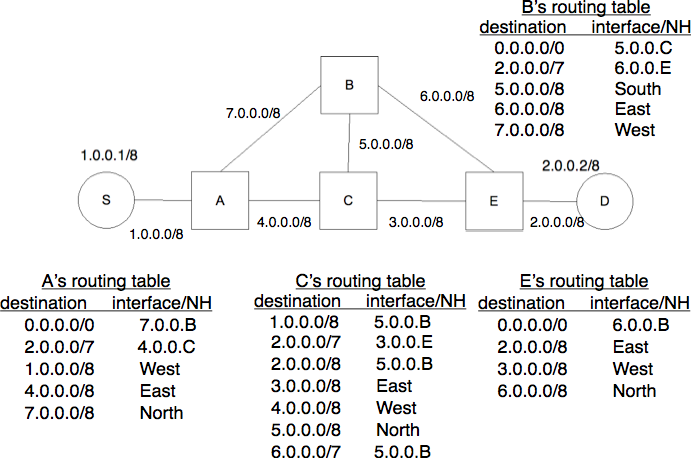

The figure below shows sample routing tables in a five-router network.

The main drawback of static routing is that it does not adapt to the evolution of the network. When a new router or link is added, all routing tables must be recomputed. Furthermore, when a link or router fails, the routing tables must be updated as well.

Distance vector routing¶

Distance vector routing is a simple distributed routing protocol. Distance vector routing allows routers to automatically discover the destinations reachable inside the network as well as the shortest path to reach each of these destinations. The shortest path is computed based on metrics or costs that are associated to each link. We use l.cost to represent the metric that has been configured for link l on a router.

Each router maintains a routing table. The routing table R can be modelled as a data structure that stores, for each known destination address d, the following attributes :

- R[d].link is the outgoing link that the router uses to forward packets towards destination d

- R[d].cost is the sum of the metrics of the links that compose the shortest path to reach destination d

- R[d].time is the timestamp of the last distance vector containing destination d

A router that uses distance vector routing regularly sends its distance vector over all its interfaces. The distance vector is a summary of the router’s routing table that indicates the distance towards each known destination. This distance vector can be computed from the routing table by using the pseudo-code below.

    Every N seconds:
        v=Vector()
        for d in R[]:
            # add destination d to vector
            v.add(Pair(d,R[d].cost))
        for i in interfaces:
            # send vector v on this interface
            send(v,i)

When a router boots, it does not know any destination in the network and its routing table only contains itself. It thus sends to all its neighbours a distance vector that contains only its address at a distance of 0. When a router receives a distance vector on link l, it processes it as follows.

    # V : received Vector
    # l : link over which vector is received
    def received(V,l):
        # received vector from link l
        for d in V[]:
            if not (d in R[]) :
                # new route
                R[d].cost=V[d].cost+l.cost
                R[d].link=l
                R[d].time=now
            else :
                # existing route, is the new better ?
                if ( ((V[d].cost+l.cost) < R[d].cost) or ( R[d].link == l) ) :
                    # Better route or change to current route
                    R[d].cost=V[d].cost+l.cost
                    R[d].link=l
                    R[d].time=now

The router iterates over all addresses included in the distance vector. If the distance vector contains an address that the router does not know, it inserts the destination inside its routing table via link l, at a distance equal to the sum of the distance indicated in the distance vector and the cost associated to link l. If the destination was already known by the router, it only updates the corresponding entry in its routing table if either :

- the cost of the new route is smaller than the cost of the already known route ( (V[d].cost+l.cost) < R[d].cost)

- the new route was learned over the same link as the current best route towards this destination ( R[d].link == l)

The first condition ensures that the router discovers the shortest path towards each destination. The second condition is used to take into account the changes of routes that may occur after a link failure or a change of the metric associated to a link.
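The two update conditions can be exercised with a small runnable version of the processing rule. This is a sketch under assumptions: the routing table is a plain dictionary, the `Route` class, the link names and the explicit `link_cost` and `now` parameters are introduced here for illustration, and all links have a cost of 1.

```python
import time


class Route:
    def __init__(self, link, cost, timestamp):
        self.link = link
        self.cost = cost
        self.time = timestamp


def received(R, V, l, link_cost, now=None):
    """Process vector V (destination -> cost) received over link l."""
    now = time.time() if now is None else now
    for d, advertised in V.items():
        candidate = advertised + link_cost
        if d not in R:
            # new route
            R[d] = Route(l, candidate, now)
        elif candidate < R[d].cost or R[d].link == l:
            # better route, or change to the route currently in use
            R[d] = Route(l, candidate, now)


# Router B with two links of cost 1 (link names are illustrative).
R = {"B": Route(None, 0, 0.0)}
received(R, {"A": 0}, "link-to-A", 1, now=1.0)
received(R, {"E": 0, "D": 1, "A": 2}, "link-to-E", 1, now=2.0)
# The same neighbour later reports a worse cost for D. It is still accepted,
# because the current route to D already uses that link.
received(R, {"E": 0, "D": 4}, "link-to-E", 1, now=3.0)
```

After these three vectors, B still reaches A directly at cost 1, while its cost towards D has increased to 5. The second condition forces a router to follow bad news coming from the neighbour it currently relies on.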

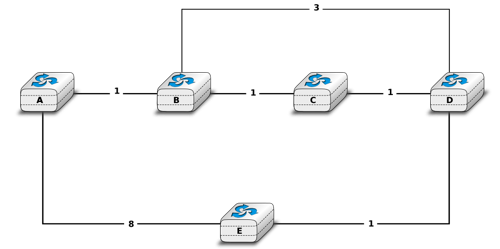

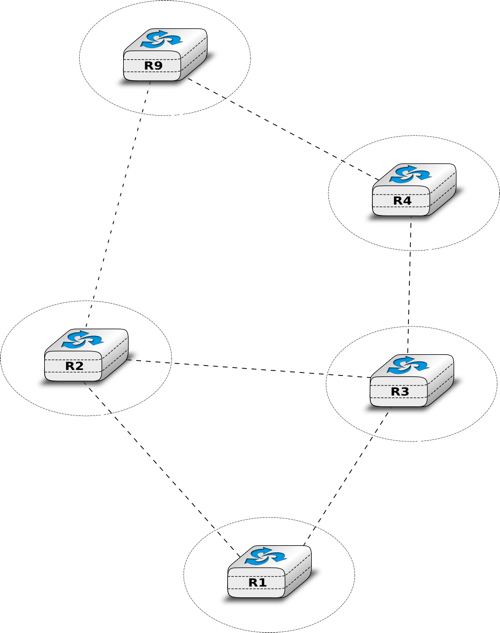

To understand the operation of a distance vector protocol, let us consider the network of five routers shown below.

Assume that A is the first to send its distance vector [A=0].

- B and D process the received distance vector and update their routing table with a route towards A.

- D sends its distance vector [D=0,A=1] to A and E. E can now reach A and D.

- C sends its distance vector [C=0] to B and E

- E sends its distance vector [E=0,D=1,A=2,C=1] to D, B and C. B can now reach A, C, D and E

- B sends its distance vector [B=0,A=1,C=1,D=2,E=1] to A, C and E. A, B, C and E can now reach all destinations.

- A sends its distance vector [A=0,B=1,C=2,D=1,E=2] to B and D.

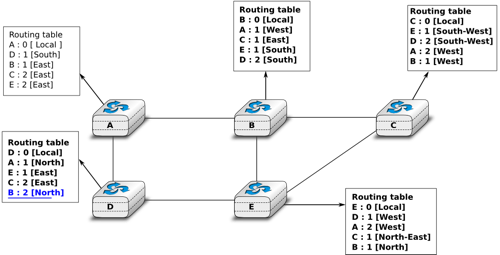

At this point, all routers can reach all other routers in the network thanks to the routing tables shown in the figure below.

To deal with link and router failures, routers use the timestamp stored in their routing table. As all routers send their distance vector every N seconds, the timestamp of each route should be regularly refreshed. Thus no route should have a timestamp older than N seconds, unless the route is not reachable anymore. In practice, to cope with the possible loss of a distance vector due to transmission errors, routers check the timestamp of the routes stored in their routing table every N seconds and remove the routes that are older than a small multiple of N seconds. When a router notices that a route towards a destination has expired, it must first associate an ∞ cost to this route and send its distance vector to its neighbours to inform them. The route can then be removed from the routing table after some time (e.g. a further multiple of N seconds), to ensure that the neighbouring routers have received the bad news, even if some distance vectors do not reach them due to transmission errors.
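The timestamp-based expiry can be sketched as a periodic check. The values of `N`, `EXPIRY_FACTOR` and `REMOVAL_FACTOR` below are assumptions chosen for the example, not values taken from a particular protocol, and the router's own entry is assumed not to be stored in `R`.

```python
import math

N = 30                 # assumed advertisement period, in seconds
EXPIRY_FACTOR = 3      # assumed: a route expires after EXPIRY_FACTOR * N seconds
REMOVAL_FACTOR = 3     # assumed: an expired route is kept this many periods more


def age_routes(R, now):
    """R maps destination -> dict with 'cost' and 'time'.

    Returns True if a route just expired, in which case the router should
    send its distance vector to announce the infinite cost.
    """
    changed = False
    for d in list(R):
        age = now - R[d]["time"]
        if R[d]["cost"] == math.inf:
            if age > REMOVAL_FACTOR * N:
                del R[d]              # neighbours had time to hear the bad news
        elif age > EXPIRY_FACTOR * N:
            R[d]["cost"] = math.inf   # advertise the destination as unreachable
            R[d]["time"] = now        # restart the clock for the removal delay
            changed = True
    return changed
```

Keeping the expired entry for a while, instead of deleting it at once, is what allows the infinite cost to be advertised in several successive distance vectors.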

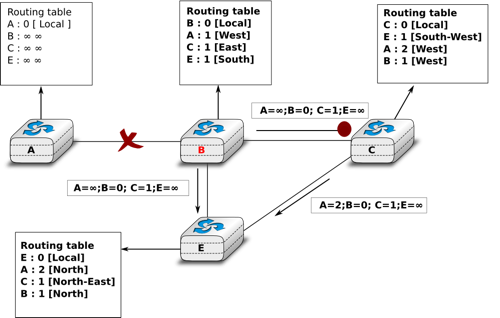

Consider the example above and assume that the link between routers A and B fails. Before the failure, A used B to reach destinations B, C and E while B only used the A-B link to reach A. The affected entries time out on routers A and B and they both send their distance vector.

- A sends its distance vector [A=0,B=∞,C=∞,D=1,E=∞]. D knows that it cannot reach B anymore via A

- D sends its distance vector [D=0,A=1,B=∞,C=2,E=1] to A and E. A recovers routes towards C and E via D.

- B sends its distance vector [B=0,A=∞,C=1,D=2,E=1] to E and C. C learns that there is no route anymore to reach A via B.

- E sends its distance vector [E=0,A=2,B=1,C=1,D=1] to D, B and C. D learns a route towards B. C and B learn a route towards A.

At this point, all routers have a routing table allowing them to reach all other routers, except router A, which cannot yet reach router B. A recovers the route towards B once router D sends its updated distance vector [D=0,A=1,B=2,C=2,E=1]. This last step is illustrated in figure Routing tables computed by distance vector after a failure, which shows the routing tables on all routers.

Consider now that the link between D and E fails. The network is now partitioned into two disjoint parts : (A, D) and (B, E, C). The routes towards B, C and E expire first on router D. At this time, router D updates its routing table.

If D sends [D=0,A=1,B=∞,C=∞,E=∞], A learns that B, C and E are unreachable and updates its routing table.

Unfortunately, if the distance vector sent to A is lost or if A sends its own distance vector ([A=0,D=1,B=3,C=3,E=2]) at the same time as D sends its distance vector, D updates its routing table to use the shorter routes advertised by A towards B, C and E. After some time D sends a new distance vector : [D=0,A=1,E=3,C=4,B=4]. A updates its routing table and after some time sends its own distance vector [A=0,D=1,B=5,C=5,E=4], etc. This problem is known as the count to infinity problem in networking literature. Routers A and D exchange distance vectors with increasing costs until these costs reach ∞. This problem may occur in other scenarios than the one depicted in the above figure. In fact, distance vector routing may suffer from count to infinity problems as soon as there is a cycle in the network. Cycles are necessary to have enough redundancy to deal with link and router failures. To mitigate the impact of counting to infinity, some distance vector protocols treat a small finite cost as ∞ (RIP, for example, uses 16). Unfortunately, this limits the metrics that network operators can use and the diameter of the networks using distance vectors.
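The exchange between A and D can be replayed with a short simulation. It assumes that D always hears A's vector before A hears D's, that the A-D link has a cost of 1, and that `INFINITY = 16` is the finite value standing in for an infinite cost. The variable names are introduced for this sketch.

```python
INFINITY = 16   # assumed finite stand-in for an infinite cost
LINK_COST = 1   # cost of the A-D link

# Costs just after D noticed that B, C and E are unreachable, while A
# still holds the routes it learned earlier via D.
cost_at_d = {"B": INFINITY, "C": INFINITY, "E": INFINITY}
cost_at_a = {"B": 3, "C": 3, "E": 2}

history = []
while any(c < INFINITY for c in cost_at_a.values()):
    # D hears A's vector first (D's own vector was lost or delayed).
    # D either has no route or already routes via A, so it accepts A's costs.
    for d in cost_at_d:
        cost_at_d[d] = min(cost_at_a[d] + LINK_COST, INFINITY)
    # A routes via D, so it always accepts what D advertises.
    for d in cost_at_a:
        cost_at_a[d] = min(cost_at_d[d] + LINK_COST, INFINITY)
    history.append(dict(cost_at_a))
```

The first entry of `history` holds the costs B=5, C=5 and E=4 that A advertises in the text, and the costs then grow by 2 per round until they hit the ceiling. A smaller ceiling shortens this phase, at the price of limiting the usable metrics.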

This count to infinity problem occurs because router A advertises to router D a route that it has learned via router D. A possible solution to avoid this problem could be to change how a router creates its distance vector. Instead of computing one distance vector and sending it to all its neighbours, a router could create a distance vector that is specific to each neighbour and only contains the routes that have not been learned via this neighbour. This could be implemented by the following pseudocode.

    Every N seconds:
        # one vector for each interface
        for l in interfaces:
            v=Vector()
            for d in R[]:
                if (R[d].link != l) :
                    v=v+Pair(d,R[d].cost)
            # end for d in R[]
            send(v,l)
        # end for l in interfaces

This technique is called split-horizon. With this technique, the count to infinity problem would not have happened in the above scenario, as router A would have advertised [A=0], since it learned all its other routes via router D. Another variant called split-horizon with poison reverse is also possible. Routers using this variant advertise a cost of ∞ for the destinations that they reach via the router to which they send the distance vector. This can be implemented by using the pseudo-code below.

    Every N seconds:
        for l in interfaces:
            # one vector for each interface
            v=Vector()
            for d in R[]:
                if (R[d].link != l) :
                    v=v+Pair(d,R[d].cost)
                else:
                    v=v+Pair(d,infinity)
            # end for d in R[]
            send(v,l)
        # end for l in interfaces
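Both variants can be written as one runnable function, shown here as a sketch. The `build_vectors` name, the `poison_reverse` flag, the tuple layout of the routing table and the link name `"A-D"` are assumptions made for the example. The sample table is that of router A once it reaches every other destination via D.

```python
import math


def build_vectors(R, interfaces, poison_reverse=False):
    """Return one distance vector (destination -> cost) per interface.

    R maps each destination to a (link, cost) tuple.
    """
    vectors = {}
    for l in interfaces:
        v = {}
        for d, (link, cost) in R.items():
            if link != l:
                v[d] = cost          # route not learned over l: advertise it
            elif poison_reverse:
                v[d] = math.inf      # route learned over l: poison it
            # otherwise (plain split horizon) the route is simply omitted
        vectors[l] = v
    return vectors


# Router A when every other destination is reached over its link to D.
R_A = {"A": (None, 0), "D": ("A-D", 1), "B": ("A-D", 3),
       "C": ("A-D", 3), "E": ("A-D", 2)}
split = build_vectors(R_A, ["A-D"])
poisoned = build_vectors(R_A, ["A-D"], poison_reverse=True)
```

With plain split horizon the vector sent to D only contains A itself, while poison reverse lists every destination reached via D with an infinite cost. The second form is more verbose, but it replaces stale information at the neighbour explicitly instead of waiting for it to time out.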

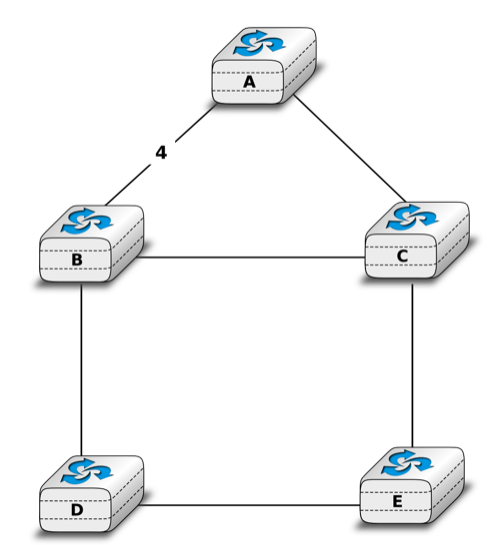

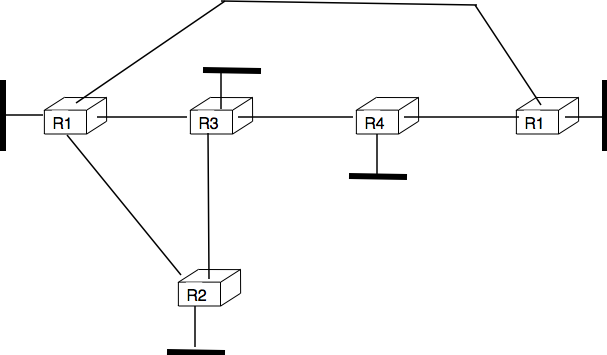

Unfortunately, split-horizon is not sufficient to avoid all count to infinity problems with distance vector routing. Consider the failure of link A-B in the network of four routers below.

After having detected the failure, router B sends its distance vectors :

- [A=∞,B=0,C=∞,E=1] to router C

- [A=∞,B=0,C=1,E=∞] to router E

If, unfortunately, the distance vector sent to router C is lost due to a transmission error or because router C is overloaded, a new count to infinity problem can occur. If router C sends its distance vector [A=2,B=1,C=0,E=∞] to router E, this router installs a route of distance 3 to reach A via C. Router E sends its distance vectors [A=3,B=∞,C=1,E=0] to router B and [A=∞,B=1,C=∞,E=0] to router C. This distance vector allows B to recover a route of distance 4 to reach A.

Link state routing¶

Link state routing is the second family of routing protocols. While distance vector routers use a distributed algorithm to compute their routing tables, link-state routers exchange messages to allow each router to learn the entire network topology. Based on this learned topology, each router is then able to compute its routing table by using a shortest path computation [Dijkstra1959].

For link-state routing, a network is modelled as a directed weighted graph. Each router is a node, and the links between routers are the edges in the graph. A positive weight is associated to each directed edge and routers use the shortest path to reach each destination. In practice, different types of weight can be associated to each directed edge :

- unit weight. If all links have a unit weight, shortest path routing prefers the paths with the least number of intermediate routers.

- weight proportional to the propagation delay on the link. If all link weights are configured this way, shortest path routing uses the paths with the smallest propagation delay.

- weight $= \frac{C}{bandwidth}$, where C is a constant larger than the highest link bandwidth in the network. If all link weights are configured this way, shortest path routing prefers higher bandwidth paths over lower bandwidth paths.

Usually, the same weight is associated to the two directed edges that correspond to a physical link (i.e. $R1 \rightarrow R2$ and $R2 \rightarrow R1$ for a link between routers R1 and R2). However, nothing in the link state protocols requires this. For example, if the weight is set as a function of the link bandwidth, then an asymmetric ADSL link could have a different weight for the upstream and downstream directions.

Other variants are possible. Some networks use optimisation algorithms to find the best set of weights to minimize congestion inside the network for a given traffic demand [FRT2002].

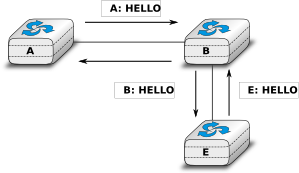

When a link-state router boots, it first needs to discover to which routers it is directly connected. For this, each router sends a HELLO message every N seconds on all of its interfaces. This message contains the router’s address. Each router has a unique address. As its neighbouring routers also send HELLO messages, the router automatically discovers to which neighbours it is connected. These HELLO messages are only sent to neighbours that are directly connected to a router, and a router never forwards the HELLO messages that it receives. HELLO messages are also used to detect link and router failures. A link is considered to have failed if no HELLO message has been received from the neighbouring router for a period of $k \times N$ seconds.

Once a router has discovered its neighbours, it must reliably distribute its local links to all routers in the network to allow them to compute their local view of the network topology. For this, each router builds a link-state packet (LSP) containing the following information :

- LSP.Router : identification (address) of the sender of the LSP

- LSP.age : age or remaining lifetime of the LSP

- LSP.seq : sequence number of the LSP

- LSP.Links[] : links advertised in the LSP. Each directed link is represented with the following information :

    - LSP.Links[i].Id : identification of the neighbour

    - LSP.Links[i].cost : cost of the link

These LSPs must be reliably distributed inside the network without using the router’s routing table since these tables can only be computed once the LSPs have been received. The Flooding algorithm is used to efficiently distribute the LSPs of all routers. Each router that implements flooding maintains a link state database (LSDB) containing the most recent LSP sent by each router. When a router receives an LSP, it first verifies whether this LSP is already stored inside its LSDB. If so, the router has already distributed the LSP earlier and it does not need to forward it. Otherwise, the router forwards the LSP on all links except the link over which the LSP was received. Reliable flooding can be implemented by using the following pseudo-code.

    # links is the set of all links on the router
    # Router R's LSP arrival on link l
    if newer(LSP, LSDB(LSP.Router)):
        LSDB.add(LSP)
        for i in links:
            if i != l:
                send(LSP, i)
    else:
        # LSP has already been flooded
        pass

In this pseudo-code, LSDB(r) returns the most recent LSP originating from router r that is stored in the LSDB. newer(lsp1,lsp2) returns true if lsp1 is more recent than lsp2. See the note below for a discussion on how newer can be implemented.
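The pseudo-code above can be turned into a small runnable simulation. The sketch below is only an illustration under stated assumptions: the `LSP` and `Router` classes, the `connect` helper and the six-link topology are invented for this example, LSPs are delivered by direct (synchronous) method calls instead of real messages, and `is_newer` uses a plain integer comparison that ignores sequence number wrap-around.

```python
class LSP:
    """Link-state packet with the fields used by the flooding check."""
    def __init__(self, router, seq, links):
        self.router = router    # LSP.Router: originator of the LSP
        self.seq = seq          # LSP.seq: sequence number
        self.links = links      # LSP.Links[]: {neighbour: cost}


class Router:
    def __init__(self, name):
        self.name = name
        self.neighbours = []    # one entry per link of this router
        self.lsdb = {}          # originator -> most recent LSP

    def is_newer(self, lsp):
        # Simplified test (assumption): sequence numbers never wrap around
        stored = self.lsdb.get(lsp.router)
        return stored is None or lsp.seq > stored.seq

    def receive(self, lsp, arrival=None):
        """Handle an LSP received from neighbour `arrival` (None: locally generated)."""
        if self.is_newer(lsp):
            self.lsdb[lsp.router] = lsp
            for neighbour in self.neighbours:
                if neighbour is not arrival:      # skip the arrival link
                    neighbour.receive(lsp, arrival=self)
        # otherwise the LSP has already been flooded: nothing to do


def connect(a, b):
    """Create a bidirectional link between two routers."""
    a.neighbours.append(b)
    b.neighbours.append(a)


# Assumed topology: A-B, A-D, B-C, B-E, C-E, D-E
routers = {name: Router(name) for name in "ABCDE"}
for x, y in [("A", "B"), ("A", "D"), ("B", "C"), ("B", "E"), ("C", "E"), ("D", "E")]:
    connect(routers[x], routers[y])

lsp_e = LSP("E", 0, {"D": 1, "B": 1, "C": 1})
routers["E"].receive(lsp_e)
print(sorted(name for name, r in routers.items() if "E" in r.lsdb))
# prints ['A', 'B', 'C', 'D', 'E']
```

Flooding terminates because each router accepts a given LSP only once: any later copy fails the `is_newer` test and is not forwarded again.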

Note

Which is the most recent LSP ?

A router that implements flooding must be able to detect whether a received LSP is newer than the stored LSP. This requires a comparison between the sequence number of the received LSP and the sequence number of the LSP stored in the link state database. The ARPANET routing protocol [MRR1979] used a 6-bit sequence number and implemented the comparison as follows (RFC 789) :

    def newer(lsp1, lsp2):
        return ((lsp1.seq > lsp2.seq and (lsp1.seq - lsp2.seq) <= 32) or
                (lsp1.seq < lsp2.seq and (lsp2.seq - lsp1.seq) > 32))

This comparison takes into account the modulo $2^6$ arithmetic used to increment the sequence numbers. Intuitively, the comparison divides the circle of all sequence numbers into two halves. Usually, the sequence number of the received LSP is equal to the sequence number of the stored LSP incremented by one, but sometimes the sequence numbers of two successive LSPs may differ, e.g. if one router has been disconnected from the network for some time. The comparison above worked well until October 27, 1980. On this day, the ARPANET crashed completely. The crash was complex and involved several routers. At one point, LSP 40 and LSP 44 from one of the routers were stored in the LSDB of some routers in the ARPANET. As LSP 44 was the newest, it should have replaced LSP 40 on all routers. Unfortunately, one of the ARPANET routers suffered from a memory problem and sequence number 40 (101000 in binary) was replaced by 8 (001000 in binary) in the buggy router and flooded. Three LSPs were then present in the network: 44 was newer than 40, which was newer than 8, but unfortunately 8 was considered to be newer than 44. All routers started to exchange these three link state packets forever and the only solution to recover from this problem was to shut down the entire network (RFC 789).
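The cycle between these three sequence numbers can be checked directly. The sketch below re-implements the same comparison on plain integers (the `newer_seq` name is an assumption of this example) and evaluates it for the three pairs involved in the crash.

```python
def newer_seq(seq1, seq2):
    """ARPANET-style comparison of two 6-bit sequence numbers."""
    return ((seq1 > seq2 and (seq1 - seq2) <= 32) or
            (seq1 < seq2 and (seq2 - seq1) > 32))


for a, b in [(44, 40), (40, 8), (8, 44)]:
    print(f"{a} newer than {b}: {newer_seq(a, b)}")
```

All three comparisons evaluate to true, so none of the three LSPs is ever considered the newest and each one keeps replacing another.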

Current link state routing protocols usually use 32-bit sequence numbers and include a special mechanism for the unlikely case that a sequence number reaches the maximum value (exhausting a 32-bit sequence number space takes about 136 years if a link state packet is generated every second).
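The 136 years figure follows from a short calculation, sketched below under the assumption of one LSP per second and a year of 365.25 days.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600     # assumption: average year length
years = 2**32 / SECONDS_PER_YEAR          # one sequence number consumed per second
print(f"{years:.1f} years")
```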

To deal with the memory corruption problem, link state packets contain a checksum. This checksum is computed by the router that generates the LSP. Each router must verify the checksum when it receives or floods an LSP. Furthermore, each router must periodically verify the checksums of the LSPs stored in its LSDB.

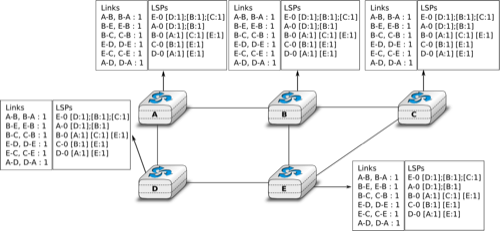

Flooding is illustrated in the figure below. By exchanging HELLO messages, each router learns its direct neighbours. For example, router E learns that it is directly connected to routers D, B and C. Its first LSP has sequence number 0 and contains the directed links E->D, E->B and E->C. Router E sends its LSP on all its links and routers D, B and C insert the LSP in their LSDB and forward it over their other links.

Flooding allows LSPs to be distributed to all routers inside the network without relying on routing tables. In the example above, the LSP sent by router E is likely to be sent twice on some links in the network. For example, routers B and C receive E’s LSP at almost the same time and forward it over the B-C link. To avoid sending the same LSP twice on each link, a possible solution is to slightly change the pseudo-code above so that a router waits for some random time before forwarding an LSP on each link. The drawback of this solution is that the delay to flood an LSP to all routers in the network increases. In practice, routers immediately flood the LSPs that contain new information (e.g. addition or removal of a link) and delay the flooding of refresh LSPs (i.e. LSPs that contain exactly the same information as the previous LSP originating from this router) [FFEB2005].

To ensure that all routers receive all LSPs, even when there are transmission errors, link state routing protocols use reliable flooding. With reliable flooding, routers use acknowledgements and, if necessary, retransmissions to ensure that all link state packets are successfully transferred to all neighbouring routers. Thanks to reliable flooding, all routers store in their LSDB the most recent LSP sent by each router in the network. By combining the received LSPs with its own LSP, each router can compute the entire network topology.

Note

Static or dynamic link metrics ?

As link state packets are flooded regularly, routers are able to measure the quality (e.g. delay or load) of their links and adjust the metric of each link according to its current quality. Such dynamic adjustments were included in the ARPANET routing protocol [MRR1979]. However, experience showed that it was difficult to tune the dynamic adjustments and ensure that no forwarding loops occur in the network [KZ1989]. Today’s link state routing protocols use metrics that are manually configured on the routers and are only changed by the network operators or network management tools [FRT2002].

When a link fails, the two routers attached to the link detect the failure by the lack of HELLO messages received in the last $k \times N$ seconds. Once a router has detected a local link failure, it generates and floods a new LSP that no longer contains the failed link and the new LSP replaces the previous LSP in the network. As the two routers attached to a link do not detect this failure exactly at the same time, some links may be announced in only one direction. This is illustrated in the figure below. Router E has detected the failure of link E-B and flooded a new LSP, but router B has not yet detected the failure.

When a link is reported in the LSP of only one of the attached routers, routers consider the link as having failed and they remove it from the directed graph that they compute from their LSDB. This is called the two-way connectivity check. This check allows link failures to be flooded quickly as a single LSP is sufficient to announce such bad news. However, when a link comes up, it can only be used once the two attached routers have sent their LSPs. The two-way connectivity check also allows for dealing with router failures. When a router fails, all its links fail by definition. Of course, the failed router cannot send a new LSP to announce its own failure. The two-way connectivity check ensures that the failed router is removed from the graph.
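The two-way connectivity check can be sketched as a filter applied when the graph is built from the LSDB. In the sketch below, the LSDB representation (a dictionary mapping each router to the `{neighbour: cost}` links of its latest LSP), the `two_way_graph` name and the three-router example are assumptions made for illustration.

```python
def two_way_graph(lsdb):
    """Build a directed graph keeping only links advertised by both endpoints.

    `lsdb` maps a router name to the {neighbour: cost} dict of its latest LSP.
    """
    graph = {router: {} for router in lsdb}
    for router, links in lsdb.items():
        for neighbour, cost in links.items():
            # keep router->neighbour only if neighbour also advertises router
            if router in lsdb.get(neighbour, {}):
                graph[router][neighbour] = cost
    return graph


# E has already flooded an LSP without link E-B; B has not yet detected the failure
lsdb = {
    "B": {"C": 1, "E": 1},
    "C": {"B": 1, "E": 1},
    "E": {"C": 1},
}
print(two_way_graph(lsdb))
```

Although B still advertises the link towards E, the edge is dropped from the graph because E no longer advertises B.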

When a router has failed, its LSP must be removed from the LSDB of all routers [1]. This can be done by using the age field that is included in each LSP. The age field is used to bound the maximum lifetime of a link state packet in the network. When a router generates an LSP, it sets its lifetime (usually measured in seconds) in the age field. All routers regularly decrement the age of the LSPs in their LSDB and an LSP is discarded once its age reaches 0. Thanks to the age field, the LSP from a failed router does not remain in the LSDBs forever.
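A minimal sketch of this ageing process is shown below. The LSDB layout (a dictionary of records with an `age` entry), the `age_lsdb` name and the numeric values are assumptions chosen for the example.

```python
def age_lsdb(lsdb, elapsed):
    """Decrement the age of every stored LSP and drop those that have expired.

    `lsdb` maps a router name to a dict with at least an "age" entry (seconds).
    """
    for router in list(lsdb):            # copy of the keys: entries may be deleted
        lsdb[router]["age"] -= elapsed
        if lsdb[router]["age"] <= 0:
            del lsdb[router]


lsdb = {
    "A": {"age": 1800, "seq": 7},   # recently refreshed LSP
    "B": {"age": 30, "seq": 3},     # LSP of a router that stopped refreshing
}
age_lsdb(lsdb, 60)
print(lsdb)
```

A router that is still alive refreshes its LSP before the age expires, so only the LSPs of failed routers disappear.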

To compute its routing table, each router computes the shortest-path tree rooted at itself by using Dijkstra’s shortest path algorithm [Dijkstra1959]. The routing table can be derived automatically from this tree as shown in the figure below.
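The computation can be sketched with a priority queue. The code below is one possible illustration of Dijkstra's algorithm, not the implementation of any particular routing protocol; the `shortest_path_tree` name, the graph representation and the link weights are assumptions. Besides the distance, it records the first hop used to reach each destination, which is what a routing table needs.

```python
import heapq


def shortest_path_tree(graph, source):
    """Dijkstra: return (distance, first_hop) dicts for all reachable routers.

    `graph` maps each router to {neighbour: positive link weight}.
    """
    dist = {source: 0}
    first_hop = {}
    queue = [(0, source, None)]          # (distance, router, first hop used)
    done = set()
    while queue:
        d, node, hop = heapq.heappop(queue)
        if node in done:
            continue                     # stale queue entry
        done.add(node)
        if hop is not None:
            first_hop[node] = hop
        for neighbour, weight in graph.get(node, {}).items():
            nd = d + weight
            if neighbour not in done and nd < dist.get(neighbour, float("inf")):
                dist[neighbour] = nd
                # when leaving the source, the first hop is the neighbour itself
                heapq.heappush(queue, (nd, neighbour, hop if hop is not None else neighbour))
    return dist, first_hop


# Assumed topology and weights, after the two-way connectivity check
graph = {
    "A": {"B": 1, "D": 3},
    "B": {"A": 1, "C": 1, "E": 3},
    "C": {"B": 1, "E": 1},
    "D": {"A": 3, "E": 1},
    "E": {"B": 3, "C": 1, "D": 1},
}
dist, first_hop = shortest_path_tree(graph, "E")
print(dist)
print(first_hop)
```

With these weights, router E reaches B and A through C, because the path E-C-B (cost 2) is shorter than the direct link E-B (cost 3).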

Footnotes

| [1] | It should be noted that link state routing assumes that all routers in the network have enough memory to store the entire LSDB. The routers that do not have enough memory to store the entire LSDB cannot participate in link state routing. Some link state routing protocols allow routers to report that they do not have enough memory and must be removed from the graph by the other routers in the network. |

Internet Protocol¶

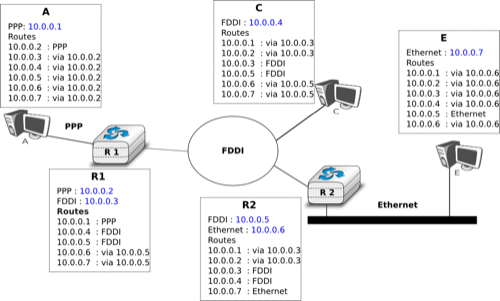

The Internet Protocol (IP) is the network layer protocol of the TCP/IP protocol suite. IP allows the applications running above the transport layer (UDP/TCP) to use a wide range of heterogeneous datalink layers. IP was designed when most point-to-point links were telephone lines with modems. Since then, IP has been able to use Local Area Networks (Ethernet, Token Ring, FDDI, ...), new wide area data link layer technologies (X.25, ATM, Frame Relay, ...) and more recently wireless networks (802.11, 802.15, UMTS, GPRS, ...). The flexibility of IP and its ability to use various types of underlying data link layer technologies is one of its key advantages.

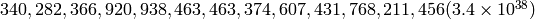

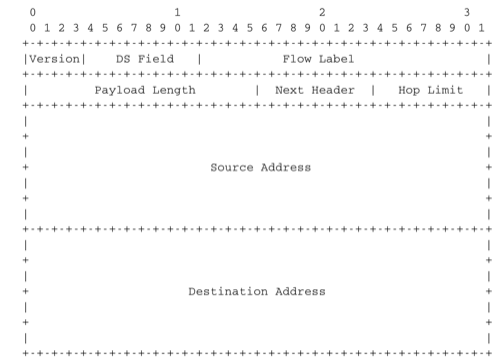

The current version of IP is version 4 specified in RFC 791. We first describe this version and later explain IP version 6, which is expected to replace IP version 4 in the not so distant future.

IP version 4¶

IP version 4 is the data plane protocol of the network layer in the TCP/IP protocol suite. The design of IP version 4 was based on the following assumptions :

- IP should provide an unreliable connectionless service (TCP provides reliability when required by the application)

- IP operates with the datagram transmission mode

- IP addresses have a fixed size of 32 bits

- IP must be usable above different types of datalink layers

- IP hosts exchange variable length packets

The addresses are an important part of any network layer protocol. In the late 1970s, the developers of IPv4 designed IPv4 for a research network that would interconnect some research labs and universities. For this use, 32-bit addresses provided many more addresses than the expected number of hosts on the network. Furthermore, 32 bits was a convenient address size for software-based routers. None of the developers of IPv4 were expecting that IPv4 would become as widely used as it is today.

IPv4 addresses are encoded as a 32-bit field. IPv4 addresses are often represented in dotted-decimal format as a sequence of four integers separated by a dot. The first integer is the decimal representation of the most significant byte of the 32-bit IPv4 address, the second integer corresponds to the next byte, and so on. For example,

- 1.2.3.4 corresponds to 00000001000000100000001100000100

- 127.0.0.1 corresponds to 01111111000000000000000000000001

- 255.255.255.255 corresponds to 11111111111111111111111111111111
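The conversion between the two representations is mechanical, as the following sketch illustrates. The function names `to_binary` and `to_dotted` are assumptions made for this example.

```python
def to_binary(dotted):
    """Return the 32-bit binary string of a dotted-decimal IPv4 address."""
    return "".join(f"{int(byte):08b}" for byte in dotted.split("."))


def to_dotted(value):
    """Return the dotted-decimal form of a 32-bit integer."""
    return ".".join(str((value >> shift) & 0xFF) for shift in (24, 16, 8, 0))


print(to_binary("1.2.3.4"))     # prints 00000001000000100000001100000100
print(to_dotted(0x7F000001))    # prints 127.0.0.1
```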

An IPv4 address is used to identify an interface on a router or a host. A router has thus as many IPv4 addresses as the number of interfaces that it has in the datalink layer. Most hosts have a single datalink layer interface and thus have a single IPv4 address. However, with the growth of wireless, more and more hosts have several datalink layer interfaces (e.g. an Ethernet interface and a WiFi interface). These hosts are said to be multihomed. A multihomed host with two interfaces has thus two IPv4 addresses.

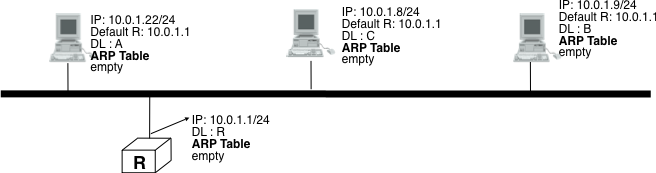

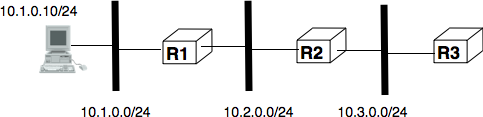

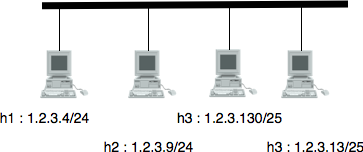

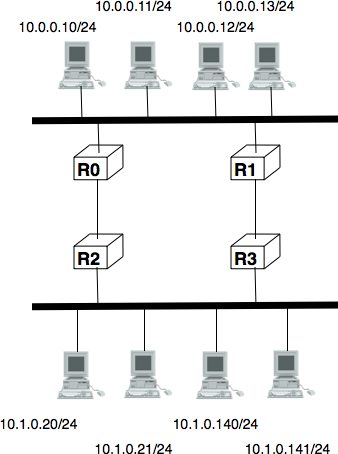

An important point to be defined in a network layer protocol is the allocation of the network layer addresses. A naive allocation scheme would be to provide an IPv4 address to each host when the host is attached to the Internet on a first come first served basis. With this solution, a host in Belgium could have address 2.3.4.5 while another host located in Africa would use address 2.3.4.6. Unfortunately, this would force all routers to maintain a specific route towards each host. The figure below shows a simple enterprise network with two routers and three hosts and the associated routing tables if such isolated addresses were used.

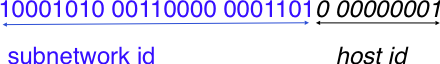

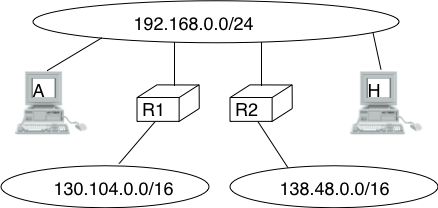

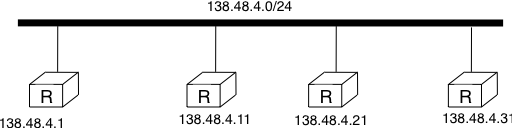

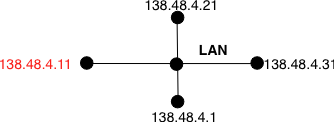

To preserve the scalability of the routing system, it is important to minimize the number of routes that are stored on each router. A router cannot store and maintain one route for each of the almost 1 billion hosts that are connected to today’s Internet. Routers should only maintain routes towards blocks of addresses and not towards individual hosts. For this, hosts are grouped in subnets based on their location in the network. A typical subnet groups all the hosts that are part of the same enterprise. An enterprise network is usually composed of several LANs interconnected by routers. A small block of addresses from the enterprise’s block is usually assigned to each LAN. An IPv4 address is composed of two parts : a subnetwork identifier and a host identifier. The subnetwork identifier is composed of the high order bits of the address and the host identifier is encoded in the low order bits of the address. This is illustrated in the figure below.

When a router needs to forward a packet, it must know the subnet of the destination address to be able to consult its forwarding table to forward the packet. RFC 791 proposed to use the high-order bits of the address to encode the length of the subnet identifier. This led to the definition of three classes of unicast addresses [2] :

| Class | High-order bits | Length of subnet id | Number of networks | Addresses per network |
|---|---|---|---|---|
| Class A | 0 | 8 bits | 128 | 16,777,216 ($2^{24}$) |
| Class B | 10 | 16 bits | 16,384 | 65,536 ($2^{16}$) |
| Class C | 110 | 24 bits | 2,097,152 | 256 ($2^{8}$) |
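The class of an address, and therefore the length of its subnet identifier, can be derived from the first byte alone. The sketch below illustrates this with a hypothetical `address_class` helper; addresses outside the three unicast classes return `None`.

```python
def address_class(dotted):
    """Return (class, subnet id length) for a class A, B or C address, else None."""
    first = int(dotted.split(".")[0])
    if (first >> 7) == 0b0:        # high-order bit 0
        return "A", 8
    if (first >> 6) == 0b10:       # high-order bits 10
        return "B", 16
    if (first >> 5) == 0b110:      # high-order bits 110
        return "C", 24
    return None


for address in ["10.1.2.3", "130.104.1.1", "192.0.2.1"]:
    print(address, address_class(address))
```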

However, these three classes of addresses were not flexible enough. A class A subnet was too large for most organisations and a class C subnet was too small. Flexibility was added by the introduction of variable-length subnets in RFC 1519. With variable-length subnets, the subnet identifier can be any size, from 1 to 31 bits. Variable-length subnets allow the network operators to use a subnet that better matches the number of hosts that are placed inside the subnet. A subnet identifier or IPv4 prefix is usually [3] represented as A.B.C.D/p where A.B.C.D is the network address obtained by concatenating the subnet identifier with a host identifier containing only zeros, and p is the length of the subnet identifier in bits. The table below provides examples of IP subnets.

| Subnet | Number of addresses | Smallest address | Highest address |
|---|---|---|---|
| 10.0.0.0/8 | 16,777,216 | 10.0.0.0 | 10.255.255.255 |
| 192.168.0.0/16 | 65,536 | 192.168.0.0 | 192.168.255.255 |
| 198.18.0.0/15 | 131,072 | 198.18.0.0 | 198.19.255.255 |
| 192.0.2.0/24 | 256 | 192.0.2.0 | 192.0.2.255 |
| 10.0.0.0/30 | 4 | 10.0.0.0 | 10.0.0.3 |
| 10.0.0.0/31 | 2 | 10.0.0.0 | 10.0.0.1 |
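The values in this table can be recomputed with Python's standard `ipaddress` module. The `describe` helper below is an assumption of this example; it returns the number of addresses and the smallest and highest address of a prefix.

```python
import ipaddress


def describe(prefix):
    """Return (number of addresses, smallest address, highest address)."""
    net = ipaddress.ip_network(prefix)
    return net.num_addresses, str(net.network_address), str(net.broadcast_address)


for prefix in ["10.0.0.0/8", "198.18.0.0/15", "10.0.0.0/30", "10.0.0.0/31"]:
    print(prefix, describe(prefix))
```

A prefix of length p contains $2^{32-p}$ addresses, which is why a /15 is twice as large as a /16.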

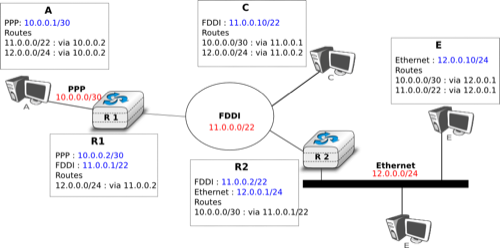

The figure below provides a simple example of the utilisation of IPv4 subnets in an enterprise network. The length of the subnet identifier assigned to a LAN usually depends on the expected number of hosts attached to the LAN. For point-to-point links, many deployments have used /30 prefixes, but recent routers are now using /31 subnets on point-to-point links RFC 3021 or do not even use IPv4 addresses on such links [4].

A second issue concerning the addresses of the network layer is the allocation scheme that is used to allocate blocks of addresses to organisations. The first allocation scheme was based on the different classes of addresses. The pool of IPv4 addresses was managed by a secretariat that allocated address blocks on a first-come first-served basis. Large organisations such as IBM and BBN, as well as Stanford or MIT, were able to obtain a class A address block. Most organisations requested a class B address block containing 65,536 addresses, which was suitable for most enterprises and universities. The table below provides examples of some IPv4 address blocks in the class B space.

| Subnet | Organisation |
|---|---|
| 130.100.0.0/16 | Ericsson, Sweden |
| 130.101.0.0/16 | University of Akron, USA |
| 130.102.0.0/16 | The University of Queensland, Australia |
| 130.103.0.0/16 | Lotus Development, USA |
| 130.104.0.0/16 | Universite catholique de Louvain, Belgium |
| 130.105.0.0/16 | Open Software Foundation, USA |

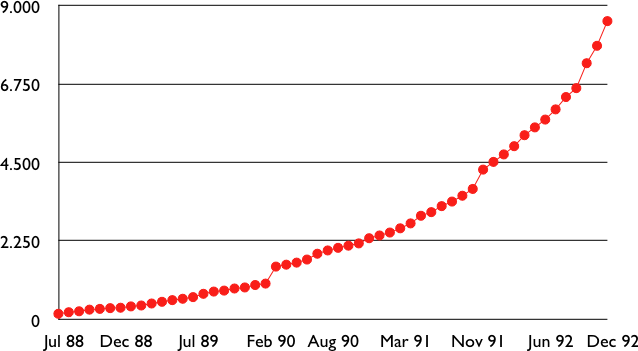

However, the Internet was a victim of its own success and in the late 1980s, many organisations were requesting blocks of IPv4 addresses and started connecting to the Internet. Most of these organisations requested class B address blocks, as class A address blocks were too large and in limited supply while class C address blocks were considered to be too small. Unfortunately, there were only 16,384 different class B address blocks and this address space was being consumed quickly. As a consequence, the routing tables maintained by the routers were growing quickly and some routers had difficulties maintaining all these routes in their limited memory [11].

Evolution of the size of the routing tables on the Internet (Jul 1988 - Dec 1992, source : RFC 1518)

Faced with these two problems, the Internet Engineering Task Force decided to develop the Classless Interdomain Routing (CIDR) architecture RFC 1518. This architecture aims at allowing IP routing to scale better than the class-based architecture. CIDR contains three important modifications compared to RFC 791.

- IP address classes are deprecated. All IP equipment must use and support variable-length subnets.

- IP address blocks are no longer allocated on a first-come-first-served basis. Instead, CIDR introduces a hierarchical address allocation scheme.

- IP routers must use longest-prefix match when they look up a destination address in their forwarding table.

The last two modifications were introduced to improve the scalability of the IP routing system. The main drawback of the first-come-first-served address block allocation scheme was that neighbouring address blocks were allocated to very different organisations and conversely, very different address blocks were allocated to similar organisations. With CIDR, address blocks are allocated by Regional IP Registries (RIR) in an aggregatable manner. A RIR is responsible for a large block of addresses and a region. For example, RIPE is the RIR that is responsible for Europe. A RIR allocates smaller address blocks from its large block to Internet Service Providers RFC 2050. Internet Service Providers then allocate smaller address blocks to their customers. When an organisation requests an address block, it must prove that it already has or expects to have in the near future, a number of hosts or customers that is equivalent to the size of the requested address block.

The main advantage of this hierarchical address block allocation scheme is that it allows the routers to maintain fewer routes. For example, consider the address blocks that were allocated to some of the Belgian universities as shown in the table below.

| Address block | Organisation |
|---|---|
| 130.104.0.0/16 | Universite catholique de Louvain |
| 134.58.0.0/16 | Katholieke Universiteit Leuven |
| 138.48.0.0/16 | Facultes universitaires Notre-Dame de la Paix |
| 139.165.0.0/16 | Universite de Liege |
| 164.15.0.0/16 | Universite Libre de Bruxelles |

These universities are all connected to the Internet exclusively via Belnet. As each university has been allocated a different address block, the routers of Belnet must announce one route for each university and all routers on the Internet must maintain a route towards each university. In contrast, consider all the high schools and the government institutions that are connected to the Internet via Belnet. An address block was assigned to these institutions after the introduction of CIDR in the 193.190.0.0/15 address block owned by Belnet. With CIDR, Belnet can announce a single route towards 193.190.0.0/15 that covers all of these high schools.
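The difference between the two situations can be checked with the standard `ipaddress` module. In the sketch below, the university blocks come from the table above, while the two customer prefixes inside 193.190.0.0/15 are hypothetical values chosen only for illustration.

```python
import ipaddress

belnet = ipaddress.ip_network("193.190.0.0/15")

universities = [ipaddress.ip_network(p) for p in (
    "130.104.0.0/16", "134.58.0.0/16", "138.48.0.0/16",
    "139.165.0.0/16", "164.15.0.0/16")]

# Scattered blocks cannot be merged: five routes must still be announced
routes = list(ipaddress.collapse_addresses(universities))
print(len(routes), "routes for the universities")

# Hypothetical customer blocks allocated inside Belnet's own block
customers = [ipaddress.ip_network(p) for p in ("193.190.10.0/24", "193.191.20.0/24")]
print(all(c.subnet_of(belnet) for c in customers))   # covered by the single /15 route
```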

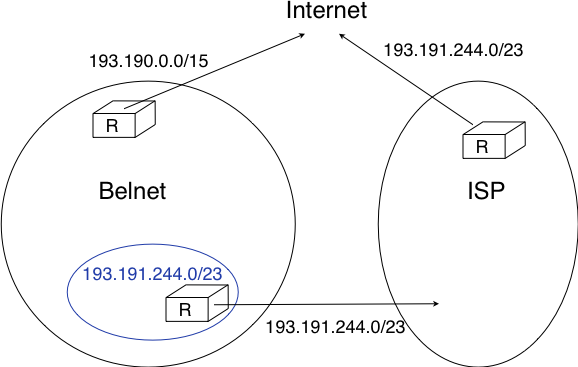

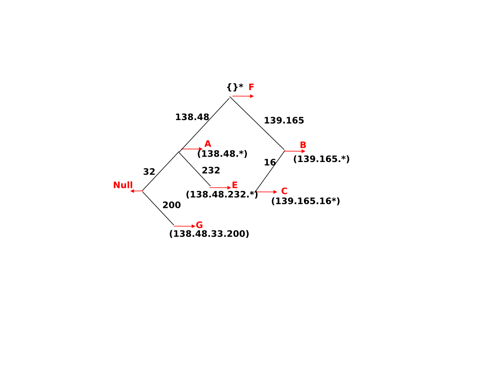

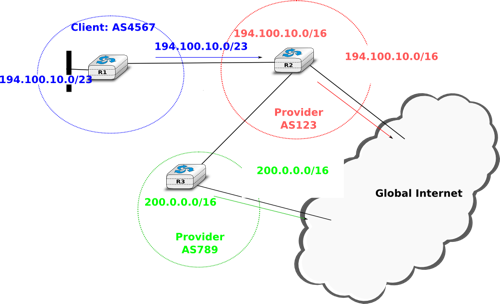

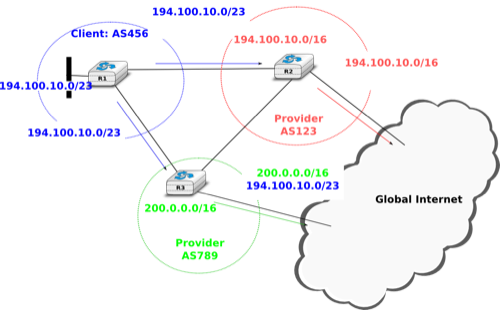

However, there is one difficulty with the aggregatable variable length subnets used by CIDR. Consider for example FEDICT, a government institution that uses the 193.191.244.0/23 address block. Assume that in addition to being connected to the Internet via Belnet, FEDICT also wants to be connected to another Internet Service Provider. The FEDICT network is then said to be multihomed. This is shown in the figure below.

With such a multihomed network, routers R1 and R2 would have two routes towards IPv4 address 193.191.245.88 : one route via Belnet (193.190.0.0/15) and one direct route (193.191.244.0/23). Both routes match IPv4 address 193.191.245.88. Since RFC 1519, when a router knows several routes towards the same destination address, it must forward packets along the route having the longest prefix length. In the case of 193.191.245.88, the route 193.191.244.0/23 is used to forward the packet. This forwarding rule is called the longest prefix match or the more specific match. All IPv4 routers implement this forwarding rule.

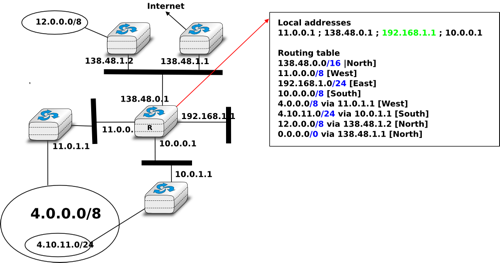

To understand the longest prefix match forwarding, consider the figure below. With this rule, the route 0.0.0.0/0 plays a particular role. As this route has a prefix length of 0 bits, it matches all destination addresses. This route is often called the default route.

- a packet with destination 192.168.1.1 received by router R is destined to the router itself. It is delivered to the appropriate transport protocol.

- a packet with destination 11.2.3.4 matches two routes : 11.0.0.0/8 and 0.0.0.0/0. The packet is forwarded on the West interface.

- a packet with destination 130.4.3.4 matches one route : 0.0.0.0/0. The packet is forwarded on the North interface.

- a packet with destination 4.4.5.6 matches two routes : 4.0.0.0/8 and 0.0.0.0/0. The packet is forwarded on the West interface.

- a packet with destination 4.10.11.254 matches three routes : 4.0.0.0/8, 4.10.11.0/24 and 0.0.0.0/0. The packet is forwarded on the South interface.
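These forwarding decisions can be reproduced with a few lines of code. In the sketch below, the forwarding table is inferred from the examples above (the router's own address 192.168.1.1 is left out), and the `lookup` function is a deliberately naive linear search, not the data structure used by real routers.

```python
import ipaddress

# Forwarding table inferred from the examples above: prefix -> outgoing interface
FORWARDING_TABLE = {
    "0.0.0.0/0": "North",
    "4.0.0.0/8": "West",
    "4.10.11.0/24": "South",
    "11.0.0.0/8": "West",
}


def lookup(destination, table=FORWARDING_TABLE):
    """Return (prefix, interface) of the longest matching prefix, or None."""
    address = ipaddress.ip_address(destination)
    matches = [ipaddress.ip_network(p) for p in table
               if address in ipaddress.ip_network(p)]
    if not matches:
        return None
    best = max(matches, key=lambda net: net.prefixlen)   # most specific route wins
    return str(best), table[str(best)]


for destination in ["11.2.3.4", "130.4.3.4", "4.4.5.6", "4.10.11.254"]:
    print(destination, lookup(destination))
```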

The longest prefix match can be implemented by using different data structures. One possibility is to use a trie. The figure below shows a trie that encodes six routes having different outgoing interfaces.
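A binary trie stores one node per prefix bit, so that a lookup follows the bits of the destination address and remembers the last route seen on the way down. The sketch below is a minimal, unoptimised illustration; the class and function names are assumptions, and the four routes are those inferred from the longest prefix match examples earlier in this section rather than the six routes of the figure.

```python
class TrieNode:
    def __init__(self):
        self.children = [None, None]   # child for bit 0 and child for bit 1
        self.interface = None          # set if a route ends at this node


def to_bits(dotted):
    """Return the 32-bit binary string of a dotted-decimal address."""
    value = 0
    for byte in dotted.split("."):
        value = (value << 8) | int(byte)
    return f"{value:032b}"


def insert(root, prefix, interface):
    address, length = prefix.split("/")
    node = root
    for bit in to_bits(address)[:int(length)]:   # only the prefix bits
        index = int(bit)
        if node.children[index] is None:
            node.children[index] = TrieNode()
        node = node.children[index]
    node.interface = interface


def longest_match(root, destination):
    node, best = root, root.interface            # root holds the default route
    for bit in to_bits(destination):
        node = node.children[int(bit)]
        if node is None:
            break
        if node.interface is not None:
            best = node.interface                # a longer prefix matches
    return best


root = TrieNode()
for prefix, interface in [("0.0.0.0/0", "North"), ("4.0.0.0/8", "West"),
                          ("4.10.11.0/24", "South"), ("11.0.0.0/8", "West")]:
    insert(root, prefix, interface)
print(longest_match(root, "4.10.11.254"))
```

A lookup visits at most 32 nodes, whatever the number of routes stored in the trie.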

Note

Special IPv4 addresses

Most unicast IPv4 addresses can appear as source and destination addresses in packets on the global Internet. However, it is worth noting that some blocks of IPv4 addresses have a special usage, as described in RFC 5735. These include :

- 0.0.0.0/8, which is reserved for self-identification. A common address in this block is 0.0.0.0, which is sometimes used when a host boots and does not yet know its IPv4 address.

- 127.0.0.0/8, which is reserved for loopback addresses. Each host implementing IPv4 must have a loopback interface (that is not attached to a datalink layer). By convention, IPv4 address 127.0.0.1 is assigned to this interface. This allows processes running on a host to use TCP/IP to contact other processes running on the same host. This can be very useful for testing purposes.

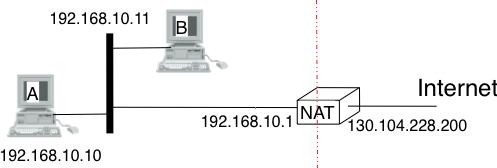

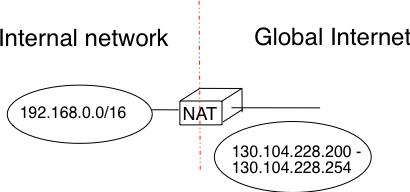

- 10.0.0.0/8, 172.16.0.0/12 and 192.168.0.0/16 are reserved for private networks that are not directly attached to the Internet. These addresses are often called private addresses or RFC 1918 addresses.

- 169.254.0.0/16 is used for link-local addresses RFC 3927. Some hosts use an address in this block when they are connected to a network that does not allocate addresses as expected.

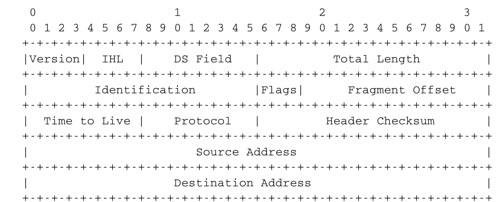

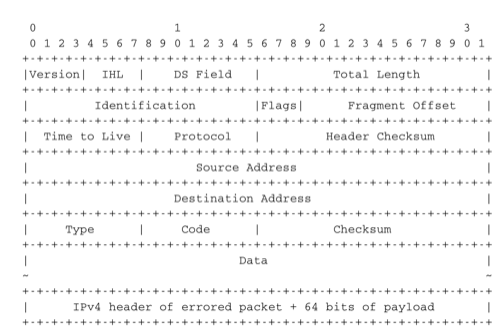

IPv4 packets¶

Now that we have clarified the allocation of IPv4 addresses and the utilisation of the longest prefix match to forward IPv4 packets, we can have a more detailed look at IPv4 by starting with the format of the IPv4 packets. The IPv4 packet format was defined in RFC 791. Apart from a few clarifications and some backward compatible changes, the IPv4 packet format has not changed significantly since the publication of RFC 791. All IPv4 packets use the 20-byte header shown below. Some IPv4 packets contain an optional header extension that is described later.

The main fields of the IPv4 header are :

- a 4-bit version field that indicates the version of IP used to build the header. Using a version field in the header allows the network layer protocol to evolve.

- a 4-bit IP Header Length (IHL) field that indicates the length of the IP header in 32-bit words. This field allows IPv4 to use options if required, but as it is encoded as a 4-bit field, the IPv4 header cannot be longer than 60 bytes (15 words of 4 bytes).

- an 8-bit DS field that is used for Quality of Service and whose usage is described later.

- an 8-bit Protocol field that indicates the transport layer protocol that must process the packet’s payload at the destination. Common values for this field [5] are 6 for TCP and 17 for UDP.

- a 16-bit length field that indicates the total length of the entire IPv4 packet (header and payload) in bytes. This implies that an IPv4 packet cannot be longer than 65535 bytes.

- a 32-bit source address field that contains the IPv4 address of the source host

- a 32-bit destination address field that contains the IPv4 address of the destination host

- a 16-bit checksum that protects only the IPv4 header against transmission errors
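The fixed 20-byte header can be decoded with Python's `struct` module. The sketch below follows the field order of RFC 791 and therefore also extracts the Identification, flags, Fragment Offset and TTL fields that are discussed later in this section. The `parse_ipv4_header` name, the dictionary keys and the sample addresses are assumptions of this example, and the checksum of the sample header is left at 0 instead of being computed.

```python
import socket
import struct


def parse_ipv4_header(data):
    """Decode the 20-byte fixed part of an IPv4 header (options are ignored)."""
    (ver_ihl, ds, length, ident, flags_frag,
     ttl, protocol, checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", data[:20])
    return {
        "version": ver_ihl >> 4,
        "ihl": ver_ihl & 0x0F,                    # header length in 32-bit words
        "ds": ds,
        "length": length,                         # header + payload, in bytes
        "identification": ident,
        "dont_fragment": bool(flags_frag & 0x4000),
        "more_fragments": bool(flags_frag & 0x2000),
        "fragment_offset": flags_frag & 0x1FFF,   # in units of 8 bytes
        "ttl": ttl,
        "protocol": protocol,
        "checksum": checksum,
        "source": socket.inet_ntoa(src),
        "destination": socket.inet_ntoa(dst),
    }


# Sample header: version 4, IHL 5, 40-byte packet, DF set, TTL 64, TCP payload
sample = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40, 1, 0x4000, 64, 6, 0,
                     socket.inet_aton("192.0.2.1"), socket.inet_aton("198.51.100.2"))
fields = parse_ipv4_header(sample)
print(fields["version"], fields["ihl"] * 4, fields["protocol"], fields["source"])
```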

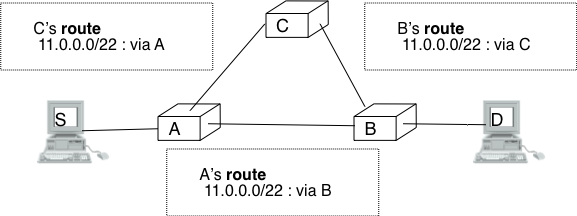

The other fields of the IPv4 header are used for specific purposes. The first is the 8-bit Time To Live (TTL) field. This field is used by IPv4 to avoid the risk of having an IPv4 packet caught in an infinite loop due to a transient or permanent error in routing tables [6]. Consider for example the situation depicted in the figure below where destination D uses address 11.0.0.56. If S sends a packet towards this destination, the packet is forwarded to router B, which forwards it to router C, which forwards it back to router A, and so on.

Unfortunately, such loops can occur for two reasons in IP networks. First, if the network uses static routing, the loop can be caused by a simple configuration error. Second, if the network uses dynamic routing, such a loop can occur transiently, for example during the convergence of the routing protocol after a link or router failure. The TTL field of the IPv4 header ensures that even if there are forwarding loops in the network, packets will not loop forever. Hosts send their IPv4 packets with a positive TTL (usually 64 or more [7]). When a router receives an IPv4 packet, it first decrements the TTL by one. If the TTL becomes 0, the packet is discarded and a message is sent back to the packet’s source (see section ICMP). Otherwise, the router performs a lookup in its forwarding table to forward the packet.
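The effect of the TTL on a looping packet can be illustrated with a short simulation. The sketch below assumes a three-router loop visited in the order B, C, A and a hypothetical `hops_before_discard` function; it only models the TTL decrement, not the forwarding table lookup or the message sent back to the source.

```python
def hops_before_discard(initial_ttl, loop=("B", "C", "A")):
    """Return (number of times the packet was forwarded, router that discarded it)."""
    ttl, forwarded = initial_ttl, 0
    while True:
        router = loop[forwarded % len(loop)]   # router currently holding the packet
        ttl -= 1                               # each router first decrements the TTL
        if ttl == 0:
            return forwarded, router           # packet discarded here
        forwarded += 1                         # otherwise forwarded to the next router


print(hops_before_discard(64))
```

With an initial TTL of 64, the packet is forwarded 63 times around the loop and is discarded by the 64th router that receives it.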

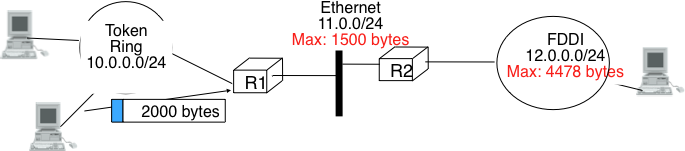

A second problem for IPv4 is the heterogeneity of the datalink layer. IPv4 is used above many very different datalink layers. Each datalink layer has its own characteristics and as indicated earlier, each datalink layer is characterised by a maximum frame size. From IP’s point of view, a datalink layer interface is characterised by its Maximum Transmission Unit (MTU). The MTU of an interface is the largest IPv4 packet (including header) that it can send. The table below provides some common MTU sizes [8].

| Datalink layer | MTU |
|---|---|
| Ethernet | 1500 bytes |
| WiFi | 2272 bytes |
| ATM (AAL5) | 9180 bytes |
| 802.15.4 | 102 or 81 bytes |
| Token Ring | 4464 bytes |
| FDDI | 4352 bytes |

Although IPv4 can send 64 KBytes long packets, few datalink layer technologies that are used today are able to send a 64 KBytes IPv4 packet inside a frame. Furthermore, as illustrated in the figure below, another problem is that a host may send a packet that would be too large for one of the datalink layers used by the intermediate routers.

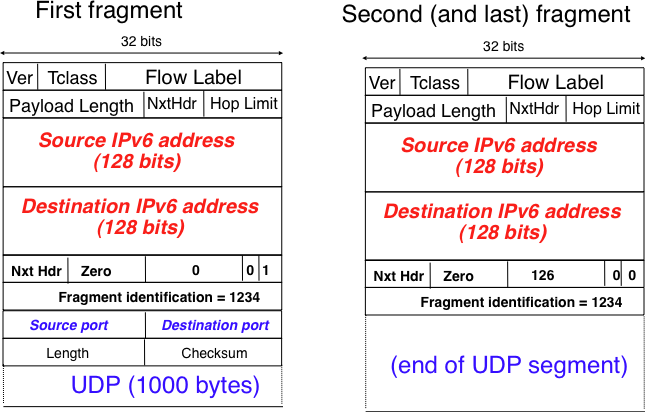

To solve these problems, IPv4 includes a packet fragmentation and reassembly mechanism. Both hosts and intermediate routers may fragment an IPv4 packet if the packet is too long to be sent via the datalink layer. In IPv4, fragmentation is performed entirely in the IP layer: a large IPv4 packet is fragmented into two or more IPv4 packets (called fragments). The IPv4 fragments of a large packet are normal IPv4 packets that are forwarded towards the destination of the large packet by intermediate routers.

The IPv4 fragmentation mechanism relies on four fields of the IPv4 header : Length, Identification, the flags and the Fragment Offset. The IPv4 header contains two flags : More fragments and Don’t Fragment (DF). When the DF flag is set, this indicates that the packet cannot be fragmented.

The basic operation of the IPv4 fragmentation is as follows. A large packet is fragmented into two or more fragments. All fragments, except the last one, carry as much payload as fits within the Maximum Transmission Unit of the link used to forward the packet, rounded down to a multiple of 8 bytes. Each IPv4 packet contains a 16-bit Identification field. When a packet is fragmented, the Identification of the large packet is copied in all fragments to allow the destination to reassemble the received fragments together. In each fragment, the Fragment Offset indicates, in units of 8 bytes, the position of the payload of the fragment in the payload of the original packet. The Length field in each fragment indicates the length of that fragment (header and payload), as in a normal IPv4 packet. Finally, the More fragments flag is set in all fragments of a large packet except the last one.

The following pseudo-code details the IPv4 fragmentation, assuming that the packet does not contain options.

    # mtu: maximum size of a packet (including header) on the outgoing link
    if p.len <= mtu:
        send(p)                      # the packet fits, no fragmentation needed
    elif p.flags == 'DF':
        discard(p)                   # too large, but fragmentation is forbidden
    else:
        # packet must be fragmented
        maxpayload = 8 * ((mtu - 20) // 8)   # must be n times 8 bytes
        payload = p[IP].payload
        pos = 0
        while len(payload) > 0:
            if len(payload) > maxpayload:
                # not the last fragment: More fragments is set
                toSend = IP(dest=p.dest, src=p.src,
                            ttl=p.ttl, id=p.id,
                            frag=p.frag + (pos // 8),
                            flags='MF',
                            len=20 + maxpayload, proto=p.proto) / payload[0:maxpayload]
                pos = pos + maxpayload
                payload = payload[maxpayload:]
            else:
                # last fragment: it keeps the flags of the original packet
                toSend = IP(dest=p.dest, src=p.src,
                            ttl=p.ttl, id=p.id,
                            frag=p.frag + (pos // 8),
                            flags=p.flags,
                            len=20 + len(payload), proto=p.proto) / payload
                payload = payload[len(payload):]   # nothing left to send
            forward(toSend)
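As a worked example of the arithmetic, the sketch below computes the Fragment Offset, Length and More fragments values for each fragment. It assumes a 20-byte header without options; the function name `fragment_layout` is hypothetical.

```python
IP_HEADER_LEN = 20  # assumption: IPv4 header without options


def fragment_layout(payload_len: int, mtu: int):
    """Return one (offset in 8-byte units, total length, more fragments) tuple per fragment."""
    # Largest payload that fits in the MTU and is a multiple of 8 bytes.
    max_payload = 8 * ((mtu - IP_HEADER_LEN) // 8)
    fragments = []
    pos = 0
    while pos < payload_len:
        size = min(max_payload, payload_len - pos)
        more = pos + size < payload_len  # False only for the last fragment
        fragments.append((pos // 8, IP_HEADER_LEN + size, more))
        pos += size
    return fragments


# A 4000-byte packet (3980 bytes of payload) sent over a link with a 1500-byte MTU.
for offset, length, more in fragment_layout(3980, 1500):
    print(offset, length, more)
```

With these values, the packet is split into three fragments with offsets 0, 185 and 370 and lengths 1500, 1500 and 1040 bytes. Only the last fragment has the More fragments flag reset.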

The fragments of an IPv4 packet may arrive at the destination in any order, as each fragment is forwarded independently in the network and may follow different paths. Furthermore, some fragments may be lost and never reach the destination.

The reassembly algorithm used by the destination host is roughly as follows. First, the destination can verify whether a received IPv4 packet is a fragment or not by checking the value of the More fragments flag and the Fragment Offset. If the Fragment Offset is set to 0 and the More fragments flag is reset, the received packet has not been fragmented. Otherwise, the packet has been fragmented and must be reassembled. The reassembly algorithm relies on the Identification field of the received fragments to associate a fragment with the corresponding packet being reassembled. Furthermore, the Fragment Offset field indicates the position of the fragment payload in the original unfragmented packet. Finally, the packet with the More fragments flag reset allows the destination to determine the total length of the original unfragmented packet.

Note that the reassembly algorithm must deal with the unreliability of the IP network. This implies that a fragment may be duplicated or a fragment may never reach the destination. The destination can easily detect fragment duplication thanks to the Fragment Offset. To deal with fragment losses, the reassembly algorithm must bound the time during which the fragments of a packet are stored in its buffer while the packet is being reassembled. This can be implemented by starting a timer when the first fragment of a packet is received. If the packet has not been reassembled upon expiration of the timer, all fragments are discarded and the packet is considered to be lost.
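The reassembly logic can be sketched as follows. This is a simplified illustration, not a complete implementation: it assumes that fragments never overlap, it identifies a packet by a key such as (source, destination, protocol, Identification), and the 30-second timeout is an arbitrary value. The class and method names are hypothetical.

```python
class Reassembler:
    """Minimal sketch of IPv4 reassembly with a per-packet timer."""

    def __init__(self, timeout: float = 30.0):
        self.timeout = timeout
        self.buffers = {}  # key -> {"start": time, "parts": {byte offset: payload}, "total": int or None}

    def expire(self, now: float) -> None:
        """Discard all fragments of packets whose reassembly timer has expired."""
        expired = [k for k, b in self.buffers.items() if now - b["start"] > self.timeout]
        for key in expired:
            del self.buffers[key]

    def receive(self, key, offset_units: int, more_fragments: bool, payload: bytes, now: float):
        """Store one fragment; return the full payload once complete, else None."""
        self.expire(now)
        if offset_units == 0 and not more_fragments:
            return payload  # the packet was not fragmented
        if not payload:
            return None  # ignore empty fragments
        buf = self.buffers.setdefault(key, {"start": now, "parts": {}, "total": None})
        offset = offset_units * 8
        buf["parts"].setdefault(offset, payload)  # a duplicate fragment is ignored
        if not more_fragments:
            # The last fragment gives the total length of the original payload.
            buf["total"] = offset + len(payload)
        if buf["total"] is None:
            return None
        data = bytearray()
        pos = 0
        while pos < buf["total"]:
            part = buf["parts"].get(pos)
            if part is None:
                return None  # a fragment is still missing
            data += part
            pos += len(part)
        del self.buffers[key]
        return bytes(data)
```

The current time is passed explicitly to `receive` so that the timer behaviour can be exercised without waiting.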

The original IP specification, in RFC 791, defined several types of options that can be added to the IP header. Each option is encoded using a type length value format. They are not widely used today and are thus only briefly described. Additional details may be found in RFC 791.

The most interesting options in IPv4 are the three options that are related to routing. The Record route option was defined to allow network managers to determine the path followed by a packet. When the Record route option was present, routers on the packet’s path had to insert their IP address in the option. This option was implemented, but as the optional part of the IPv4 header can only contain 40 bytes, which leaves room for at most nine addresses, it is impossible to discover an entire path on the global Internet. traceroute(8), despite its limitations, is a better solution to record the path towards a destination.

The other routing options are the Strict source route and the Loose source route options. The main idea behind these options is that a host may want, for any reason, to specify the path to be followed by the packets that it sends. The Strict source route option allows a host to indicate inside each packet the exact path to be followed. The Strict source route option contains a list of IPv4 addresses and a pointer to indicate the next address in the list. When a router receives a packet containing this option, it does not look up the destination address in its routing table but forwards the packet directly to the next router in the list and advances the pointer. This is illustrated in the figure below where S forces its packets to follow the RA-RB-RD path.

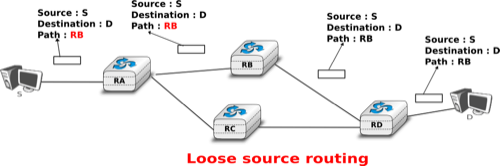

The maximum length of the optional part of the IPv4 header is a severe limitation for the Strict source route option, as it is for the Record route option. The Loose source route option does not suffer from this limitation. This option allows the sending host to indicate inside its packet some of the routers that must be traversed to reach the destination. This is shown in the figure below. S sends a packet containing a list of addresses and a pointer to the next router in the list. Initially, this pointer points to RB. When RA receives the packet sent by S, it looks up in its forwarding table the address pointed to by the Loose source route option and not the destination address. The packet is then forwarded to router RB that recognises its address in the option and advances the pointer. As there is no address listed in the Loose source route option anymore, RB and other downstream routers forward the packet by performing a lookup for the destination address.
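The pointer handling of the Loose source route option can be sketched as below. The function models the simplified description given above, not the exact encoding of RFC 791, and its name and parameters are hypothetical.

```python
def address_to_look_up(destination, route, pointer, my_address):
    """Return (address used for the forwarding lookup, updated pointer).

    route is the list of addresses carried in the option and pointer is the
    index of the next address that must be traversed.
    """
    # A router that recognises its own address in the option advances the pointer.
    if pointer < len(route) and route[pointer] == my_address:
        pointer += 1
    if pointer < len(route):
        return route[pointer], pointer  # still heading to a listed router
    return destination, pointer  # list exhausted: normal lookup on the destination


# S sends a packet to D that must traverse RB.
print(address_to_look_up("D", ["RB"], 0, "RA"))  # lookup performed by RA
print(address_to_look_up("D", ["RB"], 0, "RB"))  # lookup performed by RB
```

RA performs its lookup on RB, while RB advances the pointer and performs its lookup on the destination D.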

These two options are usually ignored by routers because they cause security problems (see RFC 6274).

ICMP version 4¶

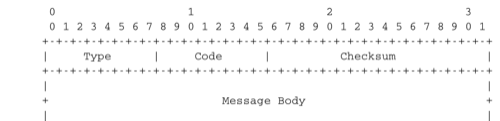

It is sometimes necessary for intermediate routers or the destination host to inform the sender of the packet of a problem that occurred while processing a packet. In the TCP/IP protocol suite, this reporting is done by the Internet Control Message Protocol (ICMP). ICMP is defined in RFC 792. ICMP messages are carried as the payload of IP packets (the protocol value reserved for ICMP is 1). An ICMP message is composed of an 8 byte header and a variable length payload that usually contains the first bytes of the packet that triggered the transmission of the ICMP message.

ICMP version 4 (RFC 792)

In the ICMP header, the Type and Code fields indicate the type of problem that was detected by the sender of the ICMP message. The Checksum protects the entire ICMP message against transmission errors and the Data field contains additional information for some ICMP messages.
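To make the header layout concrete, the sketch below builds an ICMP Echo request and computes its Checksum with the one's complement sum used throughout the TCP/IP suite. The type value 8 and the Identifier and Sequence number fields of the Echo request come from RFC 792; the function names are hypothetical.

```python
import struct


def internet_checksum(data: bytes) -> int:
    """Return the 16-bit one's complement of the one's complement sum of data."""
    if len(data) % 2:
        data += b"\x00"  # pad to a whole number of 16-bit words
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold the carries back in
    return ~total & 0xFFFF


def build_echo_request(identifier: int, sequence: int, payload: bytes) -> bytes:
    """Build an ICMP Echo request: Type=8, Code=0, Checksum, Identifier, Sequence number."""
    # The checksum is computed over the whole message with the Checksum field set to 0.
    header = struct.pack("!BBHHH", 8, 0, 0, identifier, sequence)
    checksum = internet_checksum(header + payload)
    header = struct.pack("!BBHHH", 8, 0, checksum, identifier, sequence)
    return header + payload


message = build_echo_request(0x1234, 1, b"hello")
print(message.hex())
```

A receiver can verify the message by computing the same checksum over all its bytes: the result is 0 when no error is detected.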

The main types of ICMP messages are :

- Destination unreachable : a Destination unreachable ICMP message is sent when a packet cannot be delivered to its destination due to routing problems. Different types of unreachability are distinguished :

  - Network unreachable : this ICMP message is sent by a router that does not have a route for the subnet containing the destination address of the packet

  - Host unreachable : this ICMP message is sent by a router that is attached to the subnet that contains the destination address of the packet, but this destination address cannot be reached at this time

  - Protocol unreachable : this ICMP message is sent by a destination host that has received a packet, but does not support the transport protocol indicated in the packet’s Protocol field

  - Port unreachable : this ICMP message is sent by a destination host that has received a packet destined to a port number, but no server process is bound to this port

  - Fragmentation needed : this ICMP message is sent by a router that receives a packet with the Don’t Fragment flag set that is larger than the MTU of the outgoing interface

- Redirect : this ICMP message can be sent when there are two routers on the same LAN. Consider a LAN with one host and two routers : R1 and R2. Assume that R1 is also connected to subnet 130.104.0.0/16 while R2 is connected to subnet 138.48.0.0/16. If a host on the LAN sends a packet towards 130.104.1.1 to R2, R2 needs to forward the packet again on the LAN to reach R1. This is not optimal as the packet is sent twice on the same LAN. In this case, R2 could send an ICMP Redirect message to the host to inform it that it should have sent the packet directly to R1. This allows the host to send the other packets to 130.104.1.1 directly via R1.

- Parameter problem : this ICMP message is sent when a router or a host receives an IP packet containing an error (e.g. an invalid option)

- Source quench : a router was supposed to send this message when it had to discard packets due to congestion. However, sending ICMP messages in case of congestion was not the best way to reduce congestion and since the inclusion of a congestion control scheme in TCP, this ICMP message has been deprecated.

- Time Exceeded : there are two types of Time Exceeded ICMP messages

  - TTL exceeded : a TTL exceeded message is sent by a router when it discards an IPv4 packet because its TTL reached 0.

  - Reassembly time exceeded : this ICMP message is sent when a destination has been unable to reassemble all the fragments of a packet before the expiration of its reassembly timer.

- Echo request and Echo reply : these ICMP messages are used by the ping(8) network debugging software.
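The message types listed above are identified by the Type and Code fields of the ICMP header. The sketch below maps the numeric values defined in RFC 792 to the names used in this section; the dictionary and function names are hypothetical.

```python
# Type values from RFC 792 for the messages discussed in this section.
ICMP_TYPES = {
    0: "Echo reply",
    3: "Destination unreachable",
    4: "Source quench (deprecated)",
    5: "Redirect",
    8: "Echo request",
    11: "Time exceeded",
    12: "Parameter problem",
}

# (Type, Code) pairs that refine some of the types above.
ICMP_CODES = {
    (3, 0): "Network unreachable",
    (3, 1): "Host unreachable",
    (3, 2): "Protocol unreachable",
    (3, 3): "Port unreachable",
    (3, 4): "Fragmentation needed",
    (11, 0): "TTL exceeded",
    (11, 1): "Reassembly time exceeded",
}


def describe(icmp_type: int, code: int) -> str:
    """Return a readable name for an ICMP Type/Code pair."""
    name = ICMP_TYPES.get(icmp_type, f"Unknown type {icmp_type}")
    detail = ICMP_CODES.get((icmp_type, code))
    return f"{name}: {detail}" if detail else name


print(describe(3, 4))
print(describe(11, 0))
```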

Note

Redirection attacks

ICMP redirect messages are useful when several routers are attached to the same LAN as hosts. However, they should be used with care as they also create an important security risk. One of the most annoying attacks in an IP network is called the man in the middle attack. Such an attack occurs if an attacker is able to receive, process, possibly modify and forward all the packets exchanged between a source and a destination. As the attacker receives all the packets it can easily collect passwords or credit card numbers or even inject fake information in an established TCP connection. ICMP redirects unfortunately enable an attacker to easily perform such an attack. In the figure above, consider host H that is attached to the same LAN as A and R1. If H sends to A an ICMP redirect for prefix 138.48.0.0/16, A forwards to H all the packets that it wants to send to this prefix. H can then forward them to R2. To avoid these attacks, hosts should ignore the ICMP redirect messages that they receive.

ping(8) is often used by network operators to verify that a given IP address is reachable. Each host is supposed [9] to reply with an ICMP Echo reply message when it receives an ICMP Echo request message. A sample usage of ping(8) is shown below.

ping 130.104.1.1

PING 130.104.1.1 (130.104.1.1): 56 data bytes

64 bytes from 130.104.1.1: icmp_seq=0 ttl=243 time=19.961 ms

64 bytes from 130.104.1.1: icmp_seq=1 ttl=243 time=22.072 ms

64 bytes from 130.104.1.1: icmp_seq=2 ttl=243 time=23.064 ms

64 bytes from 130.104.1.1: icmp_seq=3 ttl=243 time=20.026 ms

64 bytes from 130.104.1.1: icmp_seq=4 ttl=243 time=25.099 ms

--- 130.104.1.1 ping statistics ---

5 packets transmitted, 5 packets received, 0% packet loss

round-trip min/avg/max/stddev = 19.961/22.044/25.099/1.938 ms

Another very useful debugging tool is traceroute(8). The traceroute man page describes this tool as “print the route packets take to network host”. traceroute uses the TTL exceeded ICMP messages to discover the intermediate routers on the path towards a destination. The principle behind traceroute is very simple. When a router receives an IP packet whose TTL is set to 1 it decrements the TTL and is forced to return to the sending host a TTL exceeded ICMP message containing the header and the first bytes of the discarded IP packet. To discover all routers on a network path, a simple solution is to first send a packet whose TTL is set to 1, then a packet whose TTL is set to 2, etc. A sample traceroute output is shown below.
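Before looking at real output, the principle can be illustrated with a small simulation that does not send any packet. The path is given as a list of hypothetical router names ending with the destination; the function names are also hypothetical.

```python
def simulate_probe(path, ttl):
    """Return (responder, message) for a probe sent with the given TTL along path."""
    for router in path[:-1]:
        ttl -= 1  # each router decrements the TTL
        if ttl == 0:
            return router, "TTL exceeded"
    return path[-1], "reached destination"


def traceroute(path, max_hops=64):
    """Send probes with TTL 1, 2, 3, ... and collect the responders in order."""
    hops = []
    for ttl in range(1, max_hops + 1):
        responder, message = simulate_probe(path, ttl)
        hops.append(responder)
        if message == "reached destination":
            break
    return hops


print(traceroute(["R1", "R2", "R3", "D"]))
```

Each probe is answered by the router at which its TTL reaches 0, so the successive responders reveal the path. The output of a real traceroute run follows.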

traceroute www.ietf.org

traceroute to www.ietf.org (64.170.98.32), 64 hops max, 40 byte packets

1 CsHalles3.sri.ucl.ac.be (192.168.251.230) 5.376 ms 1.217 ms 1.137 ms

2 CtHalles.sri.ucl.ac.be (192.168.251.229) 1.444 ms 1.669 ms 1.301 ms

3 CtPythagore.sri.ucl.ac.be (130.104.254.230) 1.950 ms 4.688 ms 1.319 ms

4 fe.m20.access.lln.belnet.net (193.191.11.9) 1.578 ms 1.272 ms 1.259 ms

5 10ge.cr2.brueve.belnet.net (193.191.16.22) 5.461 ms 4.241 ms 4.162 ms

6 212.3.237.13 (212.3.237.13) 5.347 ms 4.544 ms 4.285 ms

7 ae-11-11.car1.Brussels1.Level3.net (4.69.136.249) 5.195 ms 4.304 ms 4.329 ms

8 ae-6-6.ebr1.London1.Level3.net (4.69.136.246) 8.892 ms 8.980 ms 8.830 ms

9 ae-100-100.ebr2.London1.Level3.net (4.69.141.166) 8.925 ms 8.950 ms 9.006 ms

10 ae-41-41.ebr1.NewYork1.Level3.net (4.69.137.66) 79.590 ms

ae-43-43.ebr1.NewYork1.Level3.net (4.69.137.74) 78.140 ms

ae-42-42.ebr1.NewYork1.Level3.net (4.69.137.70) 77.663 ms

11 ae-2-2.ebr1.Newark1.Level3.net (4.69.132.98) 78.290 ms 83.765 ms 90.006 ms

12 ae-14-51.car4.Newark1.Level3.net (4.68.99.8) 78.309 ms 78.257 ms 79.709 ms

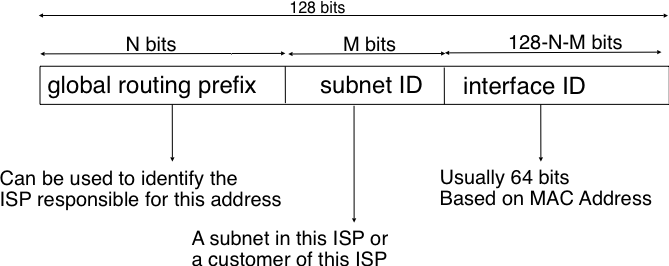

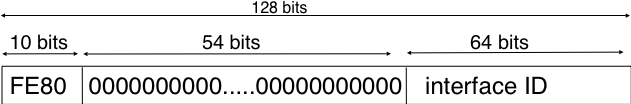

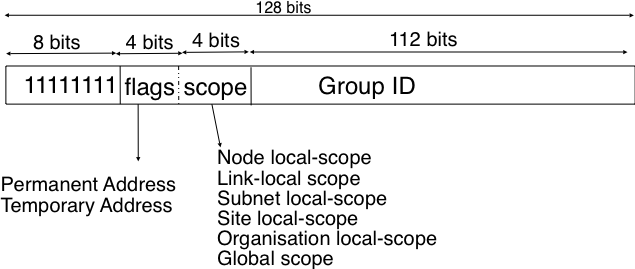

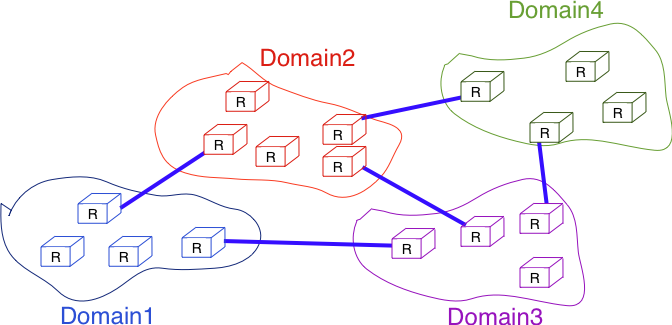

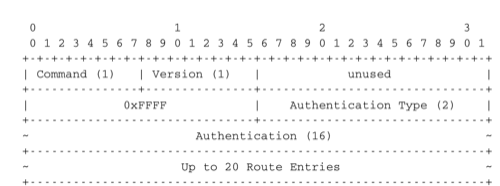

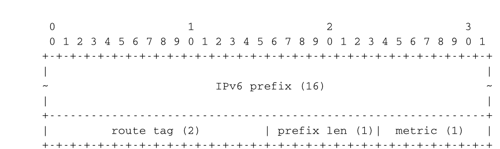

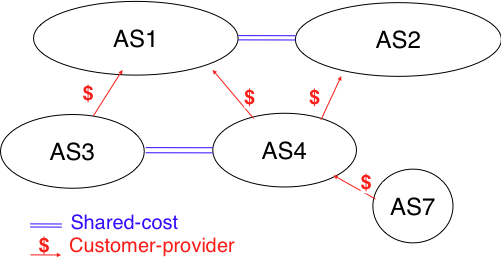

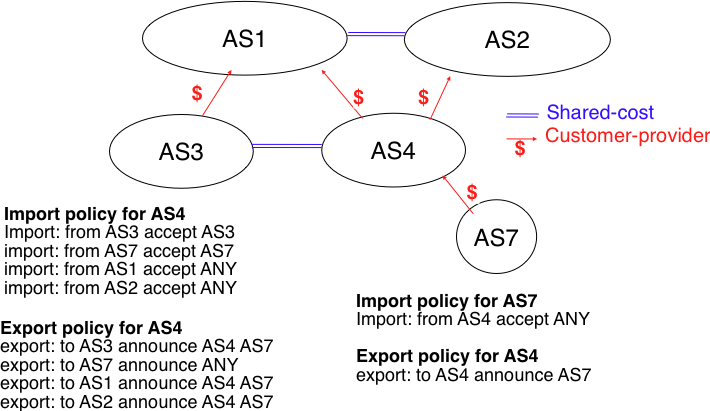

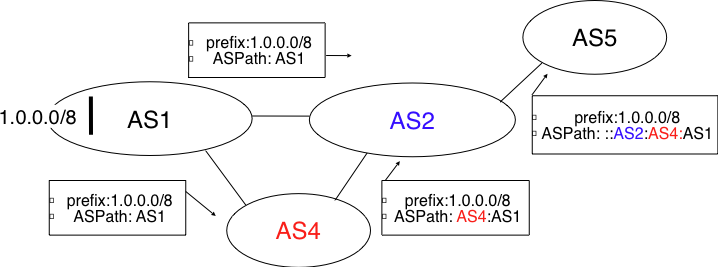

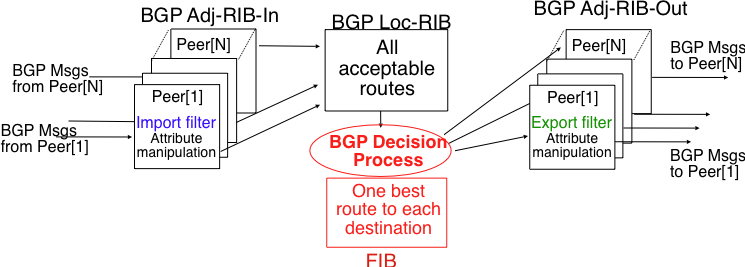

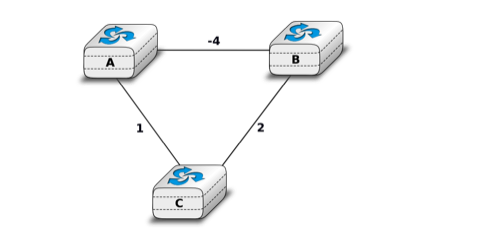

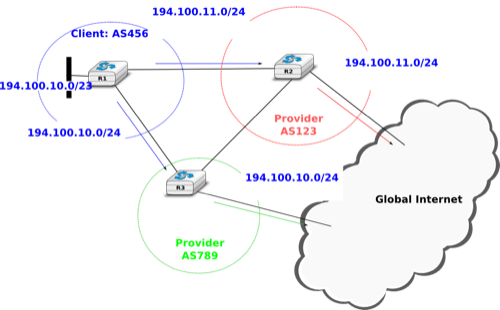

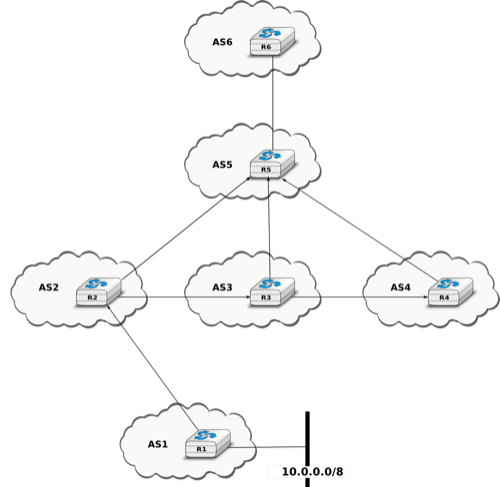

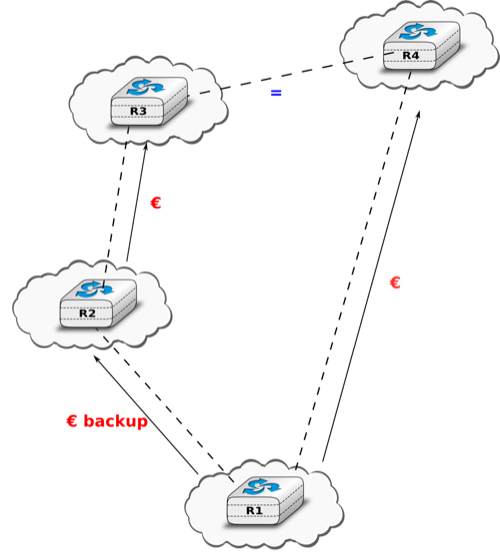

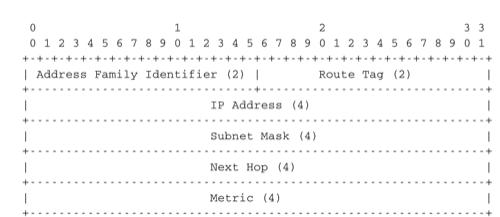

13 ex1-tg2-0.eqnwnj.sbcglobal.net (151.164.89.249) 78.460 ms 78.452 ms 78.292 ms